Gui Yang, National Engineering Research Center for Advanced Polymer Processing Technology, The Key Laboratory of Material Processing and Mold of Ministry of Education, Zhengzhou University, Zhengzhou 450002, Henan, China. E-mail: guiyang@zzu.edu.cn

Xinyang He, National & Local Joint Engineering Research Center of Technical Fiber Composites for Safety and Healthy, School of Textile & Clothing, Nantong University, Nantong 226019, Jiangsu, China; Hai’an Institute of Advanced Textile, Nantong University, Nantong 226019, Jiangsu, China; Jiangsu Hongshun Synthetic Fiber Technology Co., Ltd., Nantong 226600, Jiangsu, China. E-mail: hexinyang@ntu.edu.cn

Abstract

Despite rapid progress in health monitoring, many smart wearable devices still function primarily as passive sensing-and-logging platforms. Their performance is constrained by fixed hardware configurations and cloud-centric analytics pipelines. As a result, they often fail to deliver real-time responses to user intent and rarely support closed-loop physical intervention. This perspective argues that enabling embodied intelligence in wearables requires three paradigm shifts. First, wearables should transition from closed, integrated hardware to open, modular computing architectures that can accommodate evolving on-device artificial intelligence (AI) demands. Second, cloud-dependent inference should be replaced, where appropriate, by ultra-low-latency edge intelligence to support millisecond-scale prediction and control. Third, devices should evolve from passive information feedback to active physical intervention supported by human-in-the-loop optimization. Together, these shifts may reshape the human–machine relationship by moving wearables from external tools toward digital partners that operate under explicit user intent and safety constraints.

Keywords

1. Introduction

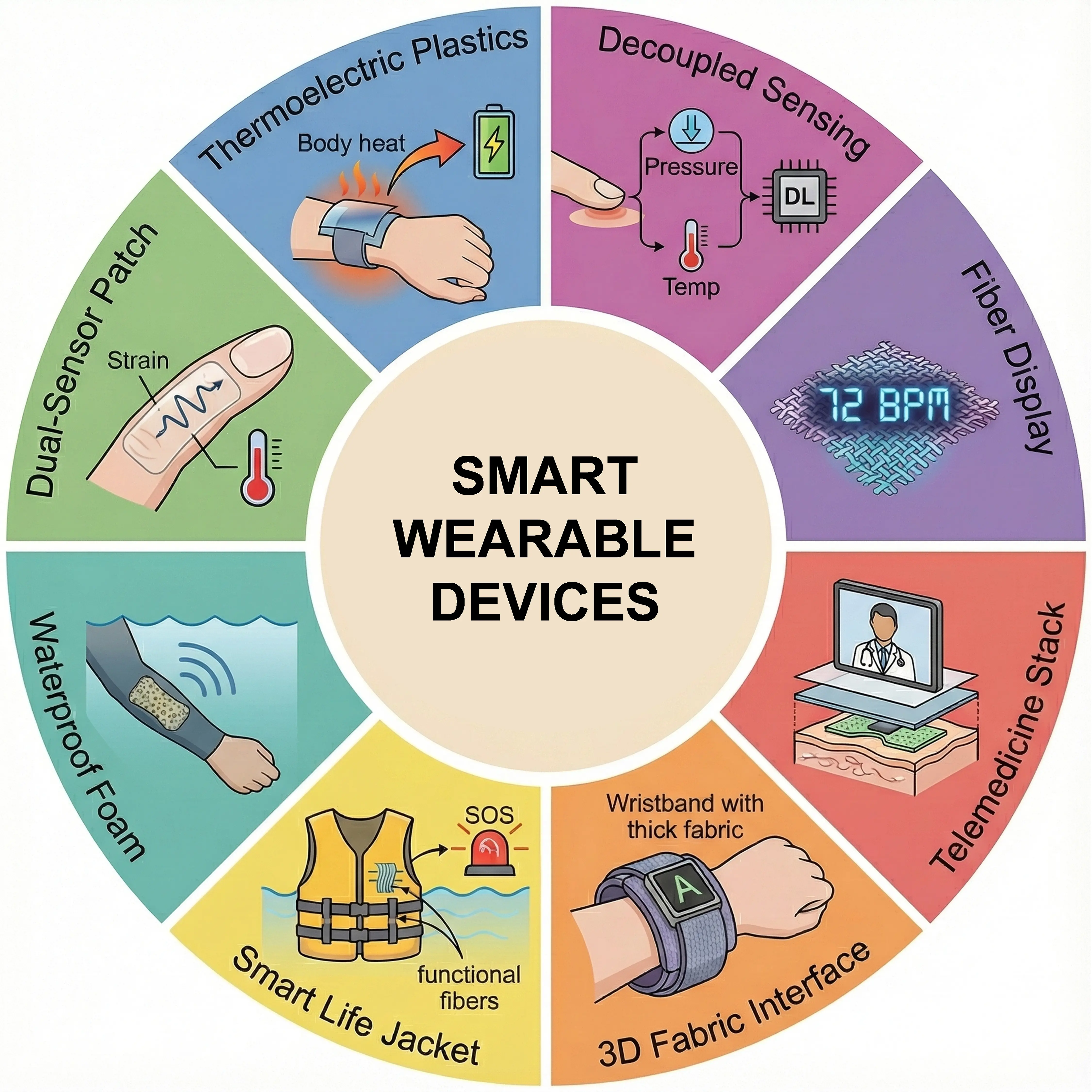

Smart wearable electronics serve as interfaces connecting biological systems to the digital world, and they continue to reshape how individuals perceive their bodies and environments[1]. With the convergence of the Internet of Things (IoT), artificial intelligence (AI), and flexible materials, wearable technology has advanced beyond single-function monitoring toward lightweight, self-powered, and increasingly integrated systems[2]. Current smart wearable systems are primarily structured around three components: sensing interfaces, energy harvesting modules, and human–machine interaction units. To summarize recent advances in this field, Figure 1 presents eight representative research achievements spanning material innovation to system-level applications. In advanced materials and multimodal sensing, researchers are developing flexible interfaces that can adapt to complex skin deformations. Wang et al.[3] developed multi-heterojunctioned plastics with a high thermoelectric figure of merit, substantially improving the heat-to-electricity conversion efficiency of flexible materials and providing a high-performance material foundation for skin-attachable electronics. Fu et al.[4] designed a chalcogenide glass-polymer film sensor that achieved high-precision dual-mode detection of temperature and strain. To meet the demands of operation in complex environments, Yang et al.[5] developed a waterproof conductive fiber with a microcracked synergistic conductive layer, enabling stable and tunable wearable strain sensing under wet conditions.

Figure 1. State-of-the-art smart wearable devices enabling advanced sensing, energy harvesting, and interaction. Thermoelectric plastics for efficient body heat harvesting. Republished with permission from[3]. Dual-sensor patches for simultaneous strain and temperature monitoring. Republished with permission from[4]. Waterproof conductive fibers for robust wet-environment strain sensing[5]. Smart life jackets integrating functional fibers for drowning rescue[6]. 3D fabric interfaces providing breathable and washable energy solutions[7]. Telemedicine stacks for visual healthcare interaction. Republished with permission from[8]. Fiber displays enabling visual-digital synergies. Republished with permission from[9]. Decoupled sensing systems for deep learning-assisted human-machine interaction. Republished with permission from[10].

Regarding energy supply, self-powered technologies based on ambient energy harvesting have become a research hotspot because they can ease the battery-life limitations of conventional wearables. However, the efficiency and power density of current energy-harvesting modules are typically insufficient to sustain continuous on-device AI computation, so practical systems often require hybrid power strategies and stringent duty cycling. Compatibility with modular hardware and edge intelligence therefore depends on energy-aware scheduling, in which sensing and inference are event-triggered or duty-cycled and high-power actuation is reserved for brief, safety-critical windows. Zhang et al.[6] integrated multiple triboelectric fiber sensors into a smart life jacket, using triboelectric nanogenerators (TENGs) to achieve active perception and energy self-sufficiency for drowning rescue. He et al.[7] reported a three-dimensional spacer fabric device that not only achieved a high breathability of 1,300 mm·s⁻¹ but also efficiently harvested body heat via the thermoelectric effect. Combined with machine learning algorithms, this system achieved 100% accuracy in sign language recognition, exemplifying the fusion of wearable energy and computing. In terms of intelligent interaction and system integration, deep learning and novel display technologies have given wearable devices enhanced information processing and feedback capabilities. Li et al.[8] proposed a 3D stacked device combining an electroluminescent display with TENG sensing, providing a visualized interactive interface for telemedicine. Yang et al.[9] developed a fiber electronic system realizing visual–digital synergistic interaction, advancing fabric electronics toward display applications. Furthermore, Chen et al.[10] constructed a decoupled sensing system combined with deep learning, achieving independent and precise resolution of pressure and temperature signals in complex human–machine interaction scenarios.
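The energy-aware scheduling argument can be made concrete with simple power-budget arithmetic. The sketch below is a hedged illustration, not a model of any cited device: the function names and all power figures (a 5 mW active sensing-and-inference phase, a 10 µW sleep floor, 200 µW of harvested power) are hypothetical placeholders. It computes the time-averaged power of a duty-cycled workload, P_avg = D·P_active + (1 − D)·P_sleep, and the largest duty cycle D that the harvester alone could sustain.

```python
"""Duty-cycle power budget for an energy-harvesting wearable (illustrative only).

All power values are hypothetical placeholders, not measurements from the cited work.
"""


def average_power_w(duty: float, p_active_w: float, p_sleep_w: float) -> float:
    """Time-averaged power of a node that is active for a fraction `duty` of each period."""
    if not 0.0 <= duty <= 1.0:
        raise ValueError("duty cycle must lie in [0, 1]")
    return duty * p_active_w + (1.0 - duty) * p_sleep_w


def max_sustainable_duty(p_harvest_w: float, p_active_w: float, p_sleep_w: float) -> float:
    """Largest duty cycle whose average power does not exceed the harvested power.

    Solves D * P_active + (1 - D) * P_sleep = P_harvest for D, then clamps D to [0, 1].
    """
    if p_active_w <= p_sleep_w:
        raise ValueError("active power must exceed sleep power")
    duty = (p_harvest_w - p_sleep_w) / (p_active_w - p_sleep_w)
    return min(max(duty, 0.0), 1.0)


if __name__ == "__main__":
    P_ACTIVE = 5e-3     # hypothetical: 5 mW while sampling sensors and running inference
    P_SLEEP = 10e-6     # hypothetical: 10 uW deep-sleep floor
    P_HARVEST = 200e-6  # hypothetical: 200 uW from a body-heat or triboelectric harvester

    d = max_sustainable_duty(P_HARVEST, P_ACTIVE, P_SLEEP)
    print(f"max sustainable duty cycle: {d:.2%}")
    print(f"average power at that duty: {average_power_w(d, P_ACTIVE, P_SLEEP) * 1e6:.1f} uW")
```

With these placeholder numbers the node could be active only about 3.8% of the time, which illustrates why continuous on-device inference usually needs event triggering or a hybrid power source.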

These advances demonstrate significant progress in sensing, energy harvesting, and human–machine interaction. Despite these achievements, however, the dominant wearable paradigm remains largely limited to passive sensing and data logging[11]. Existing systems typically rely on cloud computing for post-processing, which introduces latency unsuitable for real-time tasks[12]. Moreover, general-purpose AI models often fail to accommodate substantial inter-individual biomechanical differences[13]. More importantly, current devices lack closed-loop physical intervention capabilities, which prevents them from providing timely assistance or protection when risks are detected[14]. This Perspective therefore proposes that next-generation smart wearable devices should evolve toward modular architectures, edge intelligence, and active intervention, offering a route toward true “embodied intelligent symbiosis”.

2. Critical Paradigm Shifts

2.1 Shift to modular reconfigurable architecture

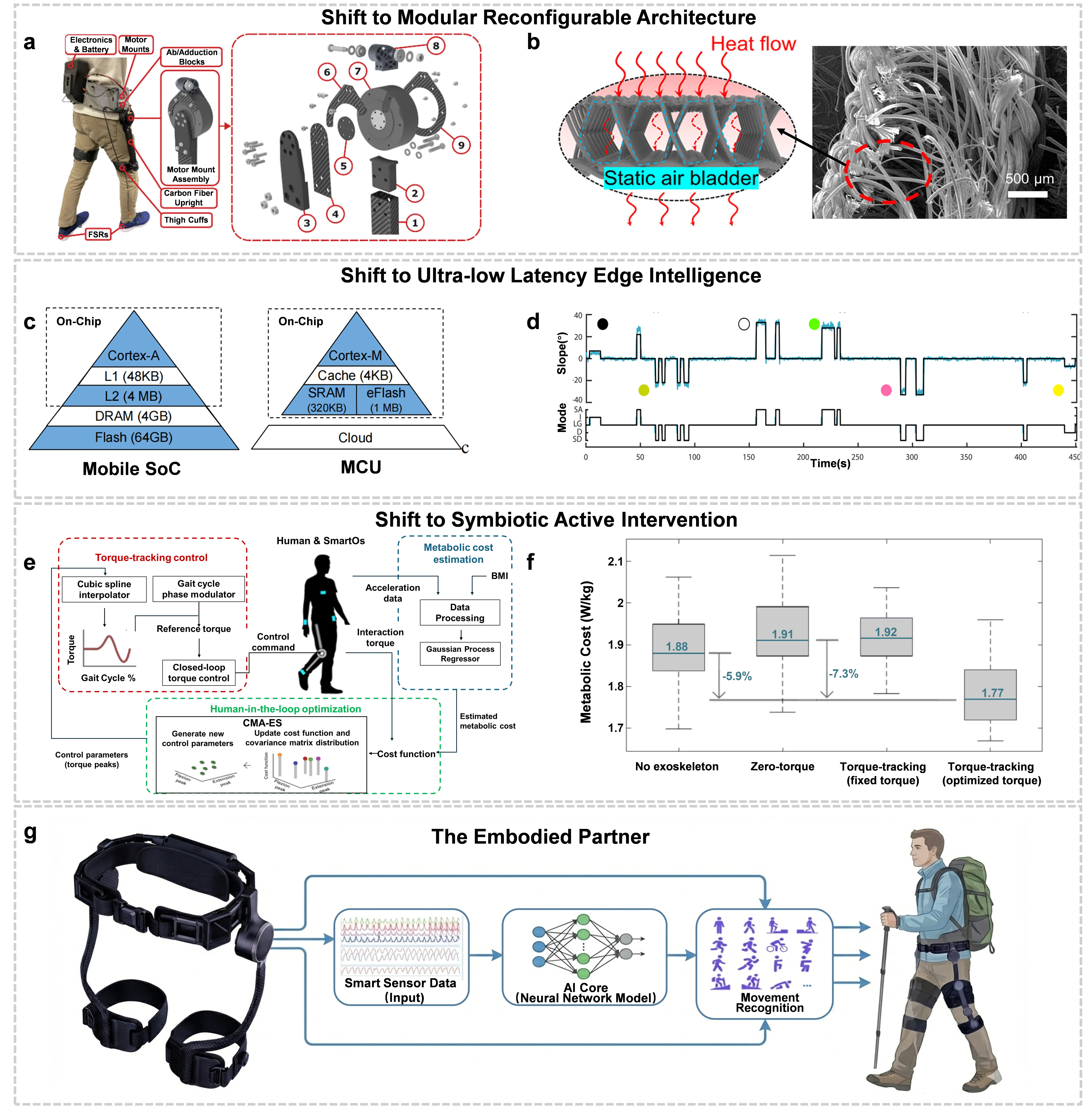

Traditional integrated wearable devices often employ a “one-size-fits-all” hardware design, in which computational power and sensing capabilities are fixed at the point of manufacture. However, AI algorithms iterate far faster than hardware is updated, so this static architecture limits how far a device’s intelligence can be upgraded. A promising alternative is an open modular architecture in which the compute module, power unit, and sensor network are decoupled, allowing users to swap modules dynamically to suit specific scenarios, such as high-intensity rehabilitation training or daily assisted walking (Figure 2a)[15]. A central bottleneck is the lack of robust, standardized interconnects for mechanical attachment, power delivery, and data links, together with the need for repeatable calibration and reliable sealing under sweat, bending, and long-term wear.
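To make the interconnect bottleneck concrete, the sketch below shows one way a host could validate a user-selected set of modules before enabling them. Everything here is hypothetical: the `ModuleDescriptor` fields, the interface-version scheme, and the numeric limits are illustrative assumptions, not an existing standard. The point is that a shared descriptor covering power and data interfaces lets the host reject an unworkable combination (for example, one whose power demand exceeds what the power unit can supply) before the device is worn.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ModuleDescriptor:
    """Hypothetical self-description a plug-in module would report when attached."""
    name: str
    kind: str                      # "compute", "power", or "sensor"
    interface_version: int         # version of a hypothetical shared connector standard
    power_draw_mw: float = 0.0     # worst-case consumption
    power_supply_mw: float = 0.0   # deliverable power (non-zero for power units)
    data_rate_kbps: float = 0.0    # sustained bandwidth needed on the shared data link


def validate_configuration(modules: List[ModuleDescriptor],
                           host_interface_version: int,
                           bus_capacity_kbps: float) -> List[str]:
    """Return human-readable problems; an empty list means the module set looks acceptable."""
    problems: List[str] = []
    kinds = {m.kind for m in modules}
    for required in ("compute", "power"):
        if required not in kinds:
            problems.append(f"missing {required} module")
    for m in modules:
        if m.interface_version != host_interface_version:
            problems.append(
                f"{m.name}: interface v{m.interface_version} != host v{host_interface_version}")
    demand = sum(m.power_draw_mw for m in modules)
    supply = sum(m.power_supply_mw for m in modules)
    if demand > supply:
        problems.append(f"power demand {demand:.0f} mW exceeds supply {supply:.0f} mW")
    rate = sum(m.data_rate_kbps for m in modules)
    if rate > bus_capacity_kbps:
        problems.append(f"data rate {rate:.0f} kbps exceeds bus capacity {bus_capacity_kbps:.0f} kbps")
    return problems


if __name__ == "__main__":
    # A hypothetical "rehabilitation" kit; every number is a placeholder.
    rehab_kit = [
        ModuleDescriptor("edge-mcu", "compute", 1, power_draw_mw=60.0),
        ModuleDescriptor("battery-s", "power", 1, power_supply_mw=150.0),
        ModuleDescriptor("emg-8ch", "sensor", 1, power_draw_mw=40.0, data_rate_kbps=256.0),
        ModuleDescriptor("imu-knee", "sensor", 1, power_draw_mw=15.0, data_rate_kbps=64.0),
    ]
    issues = validate_configuration(rehab_kit, host_interface_version=1, bus_capacity_kbps=1000.0)
    print(issues or "configuration OK")
```

With the placeholder kit the check passes; adding, say, a hypothetical 100 mW module would trip the power check. Mechanical fit and sealing cannot be verified this way and would still need physical keying and testing.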

Figure 2. Critical paradigm shifts towards next-generation wearable intelligence. (a) Concept of open modular reconfigurable architecture allowing dynamic replacement of functional units[15]; (b) High-performance modular material basis using a 3D thermoelectric spacer fabric with static air pockets[7]; (c) Architecture comparison illustrating the deployment of TinyML on microcontrollers for edge inference[19]; (d) Real-time continuous locomotion mode recognition enabling ultra-low latency response to match human neural reaction times[18]; (e) Schematic of the symbiotic control protocol where the final output is a product of user intent and AI optimization. Republished with permission from[13]; (f) Experimental data showing significant metabolic cost reduction (~7%) during walking using human-in-the-loop optimization strategies[14]; (g) The Embodied Partner: A conceptual vision of a modular active exoskeleton integrating modular hardware, edge intelligence, and symbiotic intervention as the ultimate form of wearable intelligence.

The foundation for realizing this architecture lies in advanced material systems that support plug-and-play modular designs. These materials must combine excellent mechanical properties with integrated sensing, energy harvesting, and signal transmission functions. Recent research has demonstrated this potential. For example, a three-dimensional flexible thermoelectric fabric achieves high breathability and washability while maintaining stable signal output under compressive strain (Figure 2b). Such materials can also be coupled with machine learning algorithms, such as support vector machines (SVMs), to recognize complex gestures and physiological signals with high accuracy[7]. By integrating advanced materials with intelligent algorithms, this modular approach addresses the comfort issues of long-term wear, provides a stable foundation for future wearable devices, and allows hardware to evolve iteratively, much as software does through updates[16,17].
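The feature set and kernel used with the thermoelectric fabric[7] are not specified here. As a minimal, assumption-laden illustration of how an SVM maps sensor-derived features to a gesture label, the sketch below trains a binary linear SVM with the Pegasos stochastic sub-gradient method in pure Python. The two standardized features, the gesture names, and the synthetic training points are hypothetical; a real multi-gesture system would use richer features, a multi-class scheme such as one-vs-rest, and measured data.

```python
import random
from typing import List, Tuple

Sample = Tuple[List[float], int]  # (feature vector, label in {+1, -1})


def train_linear_svm_pegasos(data: List[Sample], lam: float = 0.1,
                             epochs: int = 100, seed: int = 0) -> Tuple[List[float], float]:
    """Train a binary linear SVM (hinge loss + L2 penalty) with the Pegasos sub-gradient method.

    The bias is learned as an extra weight on a constant feature of 1.0, so it is also
    regularized; this is a common simplification of the original algorithm.
    """
    rng = random.Random(seed)
    w = [0.0] * (len(data[0][0]) + 1)
    t = 0
    for _ in range(epochs):
        for x, y in rng.sample(data, len(data)):  # shuffled pass over the training set
            t += 1
            eta = 1.0 / (lam * t)                 # Pegasos step size
            xb = list(x) + [1.0]                  # append constant feature for the bias
            margin = y * sum(wi * xi for wi, xi in zip(w, xb))
            w = [(1.0 - eta * lam) * wi for wi in w]  # gradient step on the L2 term
            if margin < 1.0:                          # hinge loss active: add its sub-gradient
                w = [wi + eta * y * xi for wi, xi in zip(w, xb)]
    return w[:-1], w[-1]


def predict(w: List[float], b: float, x: List[float]) -> int:
    """Return +1 or -1 depending on which side of the learned hyperplane x falls."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b >= 0.0 else -1


if __name__ == "__main__":
    # Hypothetical standardized features per sensor window: (signal amplitude, signal swing).
    # +1 = "fist", -1 = "open palm"; values are synthetic, not data from the cited study.
    train = [([1.2, 0.9], 1), ([0.8, 1.1], 1), ([1.0, 1.3], 1),
             ([-1.1, -0.8], -1), ([-0.9, -1.2], -1), ([-1.0, -1.0], -1)]
    w, b = train_linear_svm_pegasos(train)
    labels = {1: "fist", -1: "open palm"}
    for x in ([1.5, 1.4], [-1.3, -0.9]):
        print(x, "->", labels[predict(w, b, x)])
```

A linear model is used here only because it fits in a few lines; kernel SVMs or small neural networks may be needed when gesture classes are not linearly separable.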

2.2 Shift to ultra-low latency edge intelligence

In traditional processing paradigms, data are typically uploaded to the cloud for post-processing. Although cloud computing power is substantial, the communication latency (typically on the order of hundreds of milliseconds) is unsuitable for applications requiring real-time motion control[12]. Seamless human–machine fusion therefore hinges on combining edge AI with low-latency perception.

To ensure safety and fluid motion control, next-generation systems must target sub-millisecond to millisecond-level end-to-end latency from sensing to actuation, depending on the task dynamics (Figure 2d)[18]. This latency budget should be explicitly decomposed into signal acquisition, preprocessing, on-device inference, and actuation updates rather than reported as a single headline figure. As a practical reference, an end-to-end budget in the millisecond regime often requires sub-millisecond sensing and preprocessing, with on-device inference and control updates kept within a few milliseconds, depending on the actuator dynamics and safety margins. By deploying lightweight neural networks on microcontrollers at the edge (TinyML), devices can perform millisecond-level prediction directly on-site (Figure 2c)[19]. Neuromorphic computing chips, which emulate the spike-based signaling of biological neurons, enable real-time decoding of motion intent at low power consumption[20]. At present, however, neuromorphic platforms remain constrained by algorithmic maturity and throughput, which can limit their applicability to broad, high-bandwidth sensing and decision tasks in wearables. Additionally, algorithms should support online learning, enabling the system to build a “digital twin” model of the user during device operation and to fine-tune its parameters continuously to the user’s unique gait. To improve efficiency and generalizability, personalization can be implemented as lightweight adaptation on top of population priors, using brief calibration sessions and constrained continual updates that preserve safety and avoid overfitting to transient behaviors. In this collaborative framework, cloud-based training of general models complements edge-based execution of personalized inference, allowing devices to act as an extension of the user’s nervous system with near-imperceptible perception and decision latency[21,22].
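Decomposing the end-to-end latency budget, as advocated above, takes only a few lines of arithmetic. In the sketch below the stage names follow the text (signal acquisition, preprocessing, on-device inference, actuation update), but every timing value, the 10 ms budget, and the 20% reserved safety margin are hypothetical placeholders; real budgets depend on the actuator dynamics and the application.

```python
from typing import Dict


def check_latency_budget(stages_ms: Dict[str, float], budget_ms: float,
                         safety_margin: float = 0.2) -> Dict[str, float]:
    """Sum per-stage latencies and compare them with a budget reduced by a safety margin.

    `safety_margin` is the fraction of the budget held in reserve (hypothetical default 20%).
    Returns the total, the usable budget, and the remaining slack (negative means overrun).
    """
    if not 0.0 <= safety_margin < 1.0:
        raise ValueError("safety_margin must be in [0, 1)")
    total = sum(stages_ms.values())
    usable = budget_ms * (1.0 - safety_margin)
    return {"total_ms": total, "usable_ms": usable, "slack_ms": usable - total}


if __name__ == "__main__":
    # Hypothetical per-stage timings for an edge pipeline (not measurements from the cited work).
    edge_pipeline = {
        "signal_acquisition": 0.5,
        "preprocessing": 0.3,
        "on_device_inference": 3.0,
        "actuation_update": 2.0,
    }
    report = check_latency_budget(edge_pipeline, budget_ms=10.0)
    print(f"edge: {report['total_ms']:.1f} ms of {report['usable_ms']:.1f} ms usable, "
          f"slack {report['slack_ms']:.1f} ms")

    # Same pipeline with inference moved to a hypothetical cloud round trip of 200 ms.
    cloud = dict(edge_pipeline, on_device_inference=0.0, network_round_trip=200.0)
    print(f"cloud slack: {check_latency_budget(cloud, budget_ms=10.0)['slack_ms']:.1f} ms")
```

Reporting the per-stage entries, rather than only the total, shows immediately which stage must shrink when the slack becomes negative.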

2.3 Shift to symbiotic active intervention

The primary feedback mechanism of most current wearable devices is passive notification, such as vibrations or on-screen messages, which cannot directly reduce the physical load on the user. A crucial objective for future wearables is therefore to establish a closed-loop sensing-decision-actuation pipeline that enables assistive or protective interventions when necessary, so that devices act not only as sensors but also as actuators. Because actuation typically demands additional energy input, delivering reliable assistance within wearable power budgets remains a central engineering constraint, particularly for safety-critical torque or force outputs.
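A closed sensing-decision-actuation loop differs from passive notification mainly in that each cycle ends with a bounded physical command. The sketch below is a minimal, hypothetical illustration of one control tick: a decision step proposes a torque, and a safety layer enforces a per-tick rate limit and an absolute torque ceiling before the command reaches the actuator. The limits, the proportional decision rule, and all function names are assumptions for illustration, not a controller from the cited work; the demo also feeds a fixed list of sensed values, whereas in a real loop those readings would respond to the previous actuation.

```python
from dataclasses import dataclass


@dataclass
class SafetyLimits:
    """Hypothetical actuator limits; real values depend on the joint, device, and user."""
    max_torque_nm: float = 15.0  # absolute torque ceiling
    max_step_nm: float = 2.0     # largest allowed change per control tick


def decide_torque(joint_velocity_rad_s: float, gain_nm_s: float = 5.0) -> float:
    """Placeholder decision rule: torque proportional to the measured joint velocity."""
    return gain_nm_s * joint_velocity_rad_s


def apply_safety(proposed_nm: float, previous_nm: float, limits: SafetyLimits) -> float:
    """Rate-limit the change from the previous command, then clamp to the absolute ceiling."""
    step = max(-limits.max_step_nm, min(limits.max_step_nm, proposed_nm - previous_nm))
    command = previous_nm + step
    return max(-limits.max_torque_nm, min(limits.max_torque_nm, command))


def control_tick(joint_velocity_rad_s: float, previous_nm: float, limits: SafetyLimits) -> float:
    """One sense -> decide -> actuate cycle; returns the torque command actually sent."""
    proposed = decide_torque(joint_velocity_rad_s)
    return apply_safety(proposed, previous_nm, limits)


if __name__ == "__main__":
    limits = SafetyLimits()
    torque = 0.0
    for velocity in [0.5, 2.0, 4.0, 4.0, -1.0]:  # hypothetical sensed joint velocities
        torque = control_tick(velocity, torque, limits)
        print(f"velocity {velocity:+.1f} rad/s -> torque {torque:+.1f} N·m")
```

Placing the safety layer after the decision step means that even a faulty or adversarial decision module cannot command abrupt or excessive torque.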

The ideal control logic should follow a “Symbiotic Protocol”, in which the final output of the system is determined jointly by the user’s preset intent and the AI’s environmental optimization (Output = User Intent × AI Optimization) (Figure 2e,f)[13]. In this mode, the device can dynamically adjust its output as the environment changes: for instance, providing torque in “assist mode” to reduce metabolic consumption, or providing resistance in “training mode” to build muscle strength[23]. Such intervention builds on an in-depth understanding of multimodal data (such as electromyography, strain, and temperature). Recent work indicates that control strategies based on human-in-the-loop optimization can significantly reduce the metabolic cost of human walking, by more than 7%[14]. From an application perspective, symbiotic active intervention is equally central to rehabilitation and rescue use cases, including gait rehabilitation, fall-risk mitigation, and rapid assistance during emergencies. In these scenarios, the primary objective is not metabolic economy but reliable, context-aware actuation that prioritizes safety, stability, and task completion. However, active intervention also raises safety challenges; explainable AI (XAI) decision logic must therefore be constructed to ensure that every physical intervention is transparent, safe, and consistent with user expectations[24-28].
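The multiplicative form of the Symbiotic Protocol can be read as a gating rule: if the user’s intent is zero, no output is produced regardless of what the AI proposes. The sketch below is one hypothetical reading of Output = User Intent × AI Optimization. It assumes that intent is a normalized value in [0, 1] set by the user, that the optimization term is a non-negative torque magnitude proposed by the controller, and that the mode sets the sign (assistive torque in assist mode, resistive torque in training mode). The names, the sign convention, and the 15 N·m clamp are assumptions, not the cited controller[13].

```python
from enum import Enum


class Mode(Enum):
    ASSIST = 1     # torque along the direction of motion to reduce metabolic effort
    TRAINING = -1  # torque against the motion to provide resistance


def symbiotic_output(user_intent: float, ai_torque_nm: float, mode: Mode,
                     max_torque_nm: float = 15.0) -> float:
    """Hypothetical Output = User Intent x AI Optimization, signed by mode and clamped.

    user_intent   -- user-set scaling in [0, 1]; 0 vetoes any actuation
    ai_torque_nm  -- non-negative torque magnitude proposed by the optimizer
    """
    if not 0.0 <= user_intent <= 1.0:
        raise ValueError("user_intent must be in [0, 1]")
    if ai_torque_nm < 0.0:
        raise ValueError("ai_torque_nm is a magnitude and must be non-negative")
    raw = mode.value * user_intent * ai_torque_nm
    return max(-max_torque_nm, min(max_torque_nm, raw))


if __name__ == "__main__":
    print(symbiotic_output(0.6, 10.0, Mode.ASSIST))    # assistive torque
    print(symbiotic_output(0.6, 10.0, Mode.TRAINING))  # resistive torque
    print(symbiotic_output(0.0, 10.0, Mode.ASSIST))    # user veto: no actuation
```

Because the intent term multiplies rather than adds, the user retains a hard veto, and the final clamp keeps the optimizer from exceeding a fixed torque envelope even at full intent.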

The convergence of these paradigms enables the implementation of the “Embodied Partner” concept, as shown in Figure 2g. Addressing the limitations of static, cloud-dependent devices, this conceptual exoskeleton employs a modular architecture that supports dynamic hardware updates and environmental adaptability. Computationally, it relies on an embedded Edge AI Core to perform rapid on-device inference, avoiding network transmission latency and keeping assistance synchronized with human movement. This real-time processing capability enables symbiotic active torque assistance that physically augments human capabilities in complex environments. This integrated approach offers a practical pathway for transforming wearables into active physical assistants through hardware-algorithm co-design.

Acknowledgments

Gemini 3.1 Pro was used solely for language editing and improving clarity. The authors take full responsibility for the accuracy and scientific content of the article.

Authors contribution

Li C: Conceptualization, investigation, visualization, writing-original draft.

Zhang J: Investigation, validation, writing-review & editing.

Tian G: Conceptualization, methodology, supervision, writing-review & editing.

Yang G: Methodology, supervision, writing-review & editing.

He X: Conceptualization, resources, project administration, supervision, writing-review & editing.

Conflicts of interest

Xinyang He is a Youth Editorial Board Member of Smart Materials and Devices and is employed by Jiangsu Hongshun Synthetic Fiber Technology Co., Ltd. The other authors have no conflicts of interest to declare.

Ethical approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and materials

Not applicable.

Funding

This work was supported by the National Key R & D Program of China (Grant No. 2022YFA1504002), and the National Natural Science Foundation of China (Grant No. 52522312 and No. 52373247).

Copyright

© The Author(s) 2026.

References

1. Sun T, Feng B, Huo J, Xiao Y, Wang W, Peng J, et al. Artificial intelligence meets flexible sensors: Emerging smart flexible sensing systems driven by machine learning and artificial synapses. Nano Micro Lett. 2023;16(1):14.[DOI]

3. Wang D, Ding J, Ma Y, Xu C, Li Z, Zhang X, et al. Multi-heterojunctioned plastics with high thermoelectric figure of merit. Nature. 2024;632(8025):528-535.[DOI]

5. Yang S, Yang W, Yin R, Liu H, Sun H, Pan C, et al. Waterproof conductive fiber with microcracked synergistic conductive layer for high-performance tunable wearable strain sensor. Chem Eng J. 2023;453:139716.[DOI]

6. Zhang Y, Li C, Wei C, Cheng R, Lv T, Wang J, et al. An intelligent self-powered life jacket system integrating multiple triboelectric fiber sensors for drowning rescue. InfoMat. 2024;6(5):e12534.[DOI]

7. He X, Shi XL, Wu X, Li C, Liu WD, Zhang H, et al. Three-dimensional flexible thermoelectric fabrics for smart wearables. Nat Commun. 2025;16:2523.[DOI]

8. Li Y, Lin Q, Sun T, Qin M, Yue W, Gao S. A perceptual and interactive integration strategy toward telemedicine healthcare based on electroluminescent display and triboelectric sensing 3D stacked device. Adv Funct Mater. 2024;34(40):2402356.[DOI]

9. Yang W, Gong W, Gu W, Liu Z, Hou C, Li Y, et al. Self-powered interactive fiber electronics with visual–digital synergies. Adv Mater. 2021;33(45):2104681.[DOI]

10. Chen Z, Liu S, Kang P, Wang Y, Liu H, Liu C, et al. Decoupled temperature–pressure sensing system for deep learning assisted human–machine interaction. Adv Funct Mater. 2024;34(52):2411688.[DOI]

11. Ray PP. A review on TinyML: State-of-the-art and prospects. J King Saud Univ Comput Inf Sci. 2022;34(4):1595-1623.[DOI]

13. Slade P, Kochenderfer MJ, Delp SL, Collins SH. Personalizing exoskeleton assistance while walking in the real world. Nature. 2022;610(7931):277-282.[DOI]

14. Monteiro S, Figueiredo J, Fonseca P, Vilas-Boas JP, Santos CP. Human-in-the-loop optimization of knee exoskeleton assistance for minimizing user’s metabolic and muscular effort. Sensors. 2024;24(11):3305.[DOI]

17. Ma Z, Huang Q, Xu Q, Zhuang Q, Zhao X, Yang Y, et al. Permeable superelastic liquid-metal fibre mat enables biocompatible and monolithic stretchable electronics. Nat Mater. 2021;20(6):859-868.[DOI]

19. Banbury C, Zhou C, Fedorov I, Matas R, Thakker U, Gope D, et al. MicroNets: Neural network architectures for deploying TinyML applications on commodity microcontrollers. Proc Mach Learn Syst. 2021;3:517-532.[DOI]

20. Patil CS, Ghode SB, Kim J, Kamble GU, Kundale SS, Mannan A, et al. Neuromorphic devices for electronic skin applications. Mater Horiz. 2025;12(7):2045-2088.[DOI]

21. Laubenbacher R, Mehrad B, Shmulevich I, Trayanova N. Digital twins in medicine. Nat Comput Sci. 2024;4(3):184-191.[DOI]

24. Amann J, Blasimme A, Vayena E, Frey D, Madai VI; Precise4Q Consortium. Explainability for artificial intelligence in healthcare: A multidisciplinary perspective. BMC Med Inform Decis Mak. 2020;20:310.[DOI]

25. Abdelaal Y, Aupetit M, Baggag A, Al-Thani D. Exploring the applications of explainability in wearable data analytics: Systematic literature review. J Med Internet Res. 2024;26:e53863.[DOI]

26. Li S, Shao S, Ju L, Pan Y, Zhu N, Li J, et al. Ultra-sensitive flexible stretchable sensor based on bionic structure using graphene oxide and carboxylated multi-walled carbon nanotubes for wearable electronic skin. Results Phys. 2025;77:108441.[DOI]

27. Yang B, Zhu X, Peng C, Zhou L, Wang F, Liu Z, et al. Synchronized measurement of electromechanical responses of fabric strain sensors under large deformation. Smart Wearable Technol. 2025;1:A7.[DOI]

28. Yun G, Hu Z. Triaxial tactile sensing for next-gen robotics and wearable devices. Smart Mater Devices. 2025;1(2):202518.[DOI]

Copyright

© The Author(s) 2026. This is an Open Access article licensed under a Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, sharing, adaptation, distribution and reproduction in any medium or format, for any purpose, even commercially, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.
