Abstract

Self++ is a conceptual design framework for human–Artificial Intelligence (AI) symbiosis in extended reality (XR) that preserves human authorship while still benefiting from increasingly capable AI agents. Because XR can shape both perceptual evidence and action, apparently ‘helpful’ assistance can drift into over-reliance, covert persuasion, and blurred responsibility. Self++ grounds interaction in two complementary theories: Self-Determination Theory (autonomy, competence, relatedness) and the Free Energy Principle (predictive stability under uncertainty). It operationalises these foundations through co-determination, treating the human and the AI as a coupled system that must keep intent and limits legible, tune support over time, and preserve the user’s right to endorse, contest, and override. These requirements are summarised as the co-determination principles (T.A.N.): Transparency, Adaptivity, and Negotiability. Self++ organises augmentation into three concurrently activatable overlays spanning sensorimotor competence support (Self: competence overlay), deliberative autonomy support (Self+: autonomy overlay), and social and long-horizon relatedness and purpose support (Self++: relatedness and purpose overlay). Across the overlays, it specifies nine role patterns (Tutor, Skill Builder, Coach; Choice Architect, Advisor, Agentic Worker; Contextual Interpreter, Social Facilitator, Purpose Amplifier) that can be implemented as interaction patterns, not personas. The contribution is a role-based conceptual map that generates testable design propositions for XR-AI systems that grow capability without replacing judgment, enabling symbiotic agency in work, learning, and social life and resilient human development.

Keywords

1. Introduction

Seen in a longer arc, the present moment is a new chapter in human cognitive extension. Early visions of human–computer integration already anticipated a deep mind–machine symbiosis. In 1960, Licklider described “man-computer symbiosis” as an interactive partnership where humans and computers complement each other’s strengths[1]. Shortly afterwards, in 1962, Engelbart proposed augmenting human intellect to boost problem-solving through technology[2]. Later theories formalised this relationship: the extended mind hypothesis argued that artifacts can become literal parts of cognition[3], while distributed cognition emphasised that thinking often spans people, tools, and environments[4]. Empirical work supports parts of longstanding worries about cognitive erosion, including the “Google effect”[5], inflated perceived knowledge from internet access[6], and weakened spatial memory from heavy Global Positioning System (GPS) reliance[7]. At the same time, cognitive science and Human–Computer Interaction (HCI) stress that “thinking with things” can improve reasoning[8], and that distributing cognitive load can enable more complex problem-solving[9].

We are accelerating toward more autonomous productivity, driven by virtual agents and embodied robots. Toolchains, platforms, and organisational agent stacks are being optimised for throughput, reliability, and delegation, first under human instruction and, plausibly, under higher-level supervisory agents. This trajectory sharpens an old question: how do we gain the benefits of delegation without losing authorship? Much current work focuses on catastrophic risks, from extreme harm like misalignment to subtler manipulation and societal subordination[10,11]. Alongside these frontier concerns is a nearer failure mode: artificial intelligence

This acceleration represents a major expansion of the human cognitive niche. Evolutionary accounts suggest humans gained advantage through causal reasoning, tool-making, and cooperative action rather than biological arms races[13]. Humans externalised thinking into artifacts and social systems, expanding intelligence through culture. This tendency is captured by the notion that we are “natural-born cyborgs”[14] living in co-evolution with our technologies[15]. Today, the niche expands again through extended reality (XR) and AI. XR extends perception and presence in mixed and virtual environments, while AI externalises reasoning by perceiving and acting alongside us. The result is a tightly interwoven human–machine ecology in which cognitive strategies are

This expansion also carries costs. The complexity and volatility of an XR–AI ecosystem can create an “entropy challenge”: unpredictable stimuli and shifting agent behaviours that outstrip our capacity to maintain coherent models[15,16]. The mismatch can manifest as information overload, attentional fragmentation, and blurred agency, where users may be unsure where their intentions end and the system’s suggestions begin. These symptoms point to design failures in how autonomy, competence, and social connection are protected under delegation. Emerging phenomena make this gap visible, including split-attention demands in XR workflows[17], identity confusion as agents become more human-like[18,19], and misaligned persuasion where simulation success does not translate into real behaviour change[20]. An “AI loneliness trap” may also emerge, where convenient synthetic companionship gradually displaces human relationships for some users[21].

If AI is the latest extension of cognition, synergy is not automatic. Automation research has long catalogued challenges such as common ground, trust calibration, and complacency[22]. A large meta-analysis suggests that human–AI teams often fail to outperform the better of the human or AI alone[23,24], implying that effective teaming must be deliberately designed with interaction mechanisms that respect human cognition and shared agency. Recent work reinforces that the most accurate AI is not always the best

Self++ responds to this agency problem by grounding design in two complementary foundations: Self-Determination Theory (SDT; autonomy, competence, relatedness) and the Free Energy Principle (FEP; predictive stability under uncertainty). In simple terms, SDT specifies what must be preserved for flourishing, while FEP explains why the pressure intensifies as environments become more volatile and mediated. We operationalise these foundations through co-determination, allowing users the right to endorse, contest, and override assistance. We summarise these requirements as three co-determination principles (T.A.N.): Transparency, Adaptivity, and Negotiability.

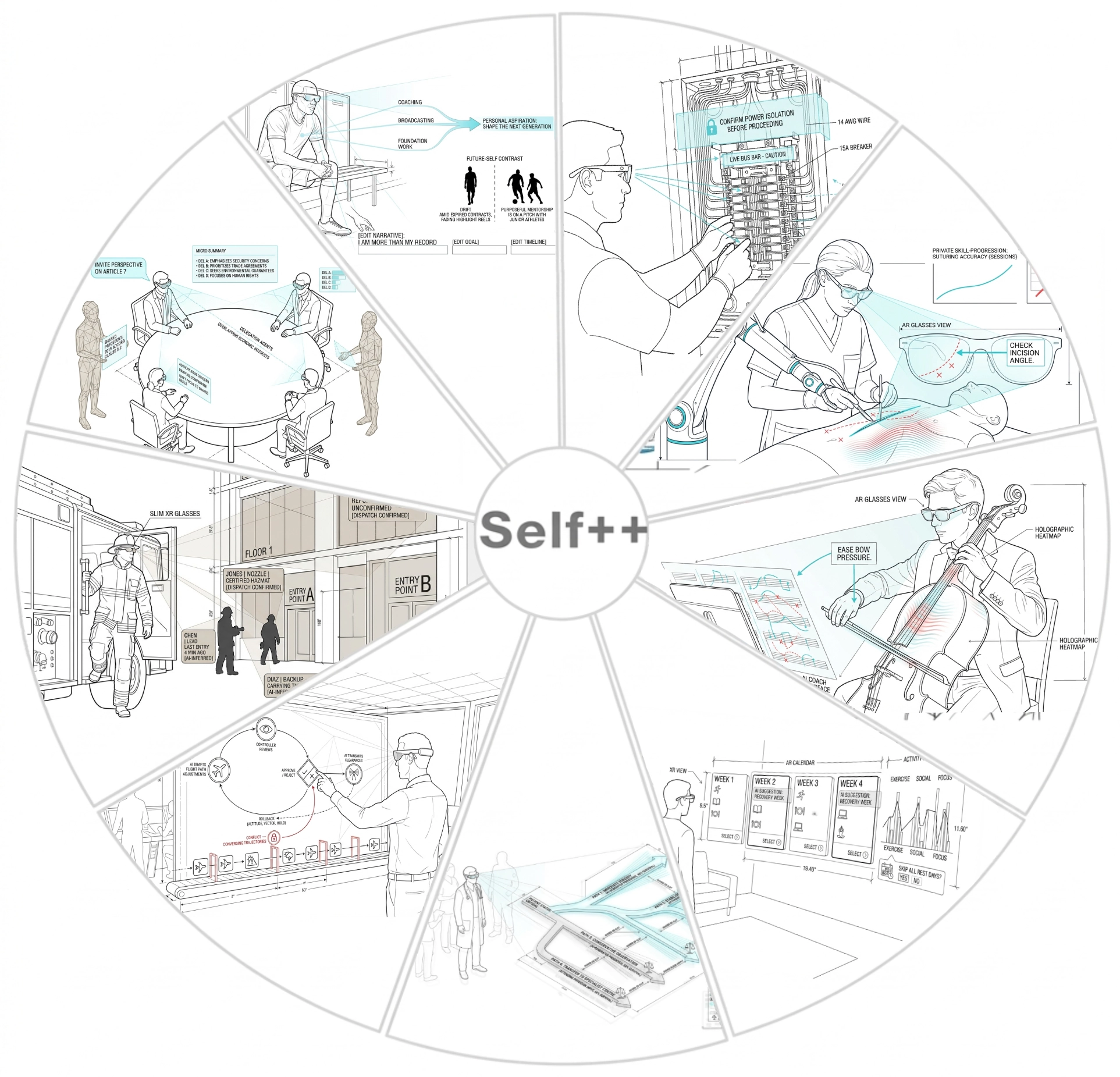

These pressures matter deeply to me as an educator and parent. Self++ is not meant to diminish AI’s utility or reject autonomy as a design direction. Instead, it offers a framework for human–AI systems that preserve the benefits of intelligent support while strengthening user agency, competence, and social integration. Self++ organises augmented agency into three concurrently activatable overlays and nine role patterns spanning sensorimotor support, deliberative decision support, and longer-horizon social and purpose alignment (Figure 1). We present Self++ as a theoretically grounded perspective rather than a validated specification. The framework is intended to organise an emerging design space, generate testable propositions, and provide conceptual tools for researchers and practitioners navigating the complex territory of human–AI collaboration in XR. Its value lies in making this territory more tractable for systematic investigation, not in prescribing final solutions. Across all role patterns, T.A.N. functions as a practical safeguard, so uncertainty regulation supports human development rather than bypassing it.

Figure 1. The nine Self++ role patterns organised across three concurrently activatable overlays, with co-determination principles (T.A.N.) scaling in strength with overlay scope and initiative. Overlay 1 (Self): Competence support. R1, Tutor: reduces novice uncertainty through a safe, learnable corridor (e.g., a trainee electrician receives anchored directional arrows, step gating, and ghosted hand exemplars through XR glasses while working on a residential electrical panel). R2, Skill Builder: calibrates and generalises skill through variability and augmented feedback (e.g., a training doctor receives real-time motion traces and a holographic accuracy heatmap overlaid onto a practice mannequin during a surgical procedure). R3, Coach: builds robustness under stress and supports self-correction (e.g., a cellist receives intonation feedback, fingerboard pressure heatmaps, and metacognitive prompts during a live performance, with social comparison replaced by private progression tracking). Overlay 2 (Self+): Autonomy support. R4, Choice Architect: shapes the decision context while preserving authorship (e.g., a person views a floating AR monthly calendar where recovery weeks are gently highlighted and a friction gate requests confirmation before overriding rest days). R5, Advisor: externalises deliberation by making counterfactuals and trade-offs inspectable (e.g., an ER doctor sees a branching holographic decision tree with uncertainty bands, survival-confidence estimates, and provenance badges distinguishing AI prognosis from attending physician input). R6, Agentic Worker: executes delegated tasks under a proposal-approval loop with rollback (e.g., an air traffic control shift manager oversees an AI-drafted routing queue where conflict items are flagged and rerouted back for manual handling, with any clearance reversible before transmission). Overlay 3 (Self++): Relatedness and purpose support. 
R7, Contextual Interpreter: makes identity, norms, and downstream impacts legible to reduce social surprise (e.g., a firefighter arriving at an incident sees AR-labelled crew roles, building entry points, and provenance badges distinguishing dispatch-confirmed from AI-inferred information). R8, Social Facilitator: improves human-to-human coordination and repair (e.g., diplomats at a round-table negotiation receive personalised, opt-in AR overlays including speaking-time balance, perspective-invitation prompts, and neutral micro-summaries of each delegation’s position, while embodied virtual agents surface shared precedents as common ground). R9, Purpose Amplifier: supports long-horizon value coherence by making future trajectories legible and editable (e.g., a retiring athlete views a holographic value-map converging personal strengths toward an aspiration, with a future-self contrast between drift and purposeful mentorship and editable identity-narrative fields).

2. Background

2.1 Theoretical foundations: Self-determination and free energy

As noted in the Introduction, the emerging XR–AI ecology raises the stakes for supportive AI design. Self++ is grounded in two complementary pillars: SDT[32] and the FEP[16]. Together, they explain why autonomy, competence, and relatedness matter under intelligent mediation, and how systems might support them without eroding agency. SDT identifies three basic psychological needs (autonomy, competence, and relatedness) as essential for motivation and well-being[32]. Autonomy is volitional, self-endorsed action; competence is efficacy and skill growth; relatedness is connection and belonging. When these needs are supported, people show stronger intrinsic motivation, learning, and well-being; when they are chronically frustrated, disengagement and poorer performance follow.

The Free Energy Principle complements SDT by explaining why these needs become harder to protect as environments grow more complex. Predictive processing accounts formalise perception and action as approximate Bayesian inference aimed at minimising surprise[33]. Friston’s FEP generalises this: biological systems maintain integrity by minimising free energy, closely related to long-run prediction error[16]. The brain updates internal models to keep sensory input within expected bounds; as environments become more volatile, the computational and attentional cost of this stabilisation rises. Because human predictive models evolved for relatively stable ecologies, they can be mismatched to fast-changing, algorithmically mediated XR, producing sustained prediction errors experienced as stress, confusion, or overload[15].
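For readers who want the formal object behind this paragraph, the variational free energy can be written in its standard textbook form. This is general FEP notation rather than anything specific to Self++; the symbols o (observations), s (hidden states), q (the agent's approximate posterior), and p (its generative model) are introduced here purely for illustration.

```latex
\[
F[q, o] \;=\; \mathbb{E}_{q(s)}\!\left[\ln q(s) - \ln p(o, s)\right]
\;=\; D_{\mathrm{KL}}\!\left[\,q(s)\,\|\,p(s \mid o)\,\right] - \ln p(o)
\;\ge\; -\ln p(o)
\]
```

Because the Kullback–Leibler term is non-negative, F is an upper bound on surprise, −ln p(o). Minimising F therefore both tightens the agent's beliefs about hidden states and bounds how surprising its observations are, which is the sense in which free energy is tied to prediction error in the text above.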

Self++ bridges SDT and FEP by treating autonomy, competence, and relatedness as stabilising conditions for predictive cognition: competence improves anticipatory control, autonomy protects against externally imposed goals that conflict with internal priors, and relatedness offloads uncertainty onto shared social models.

To operationalise these needs in interaction design, we propose co-determination. Rather than command-following tools or unilateral autonomy, co-determination treats human and AI as a coupled system that negotiates control to preserve psychological stability and minimise prediction error. We summarise this stance as three co-determination principles (T.A.N.):

• Transparency: From an FEP perspective, the system should minimise “hidden states.” If an agent’s reasoning or uncertainty is opaque, users cannot predict its behaviour, increasing anxiety and miscalibrated trust. Transparency supports accurate user mental models[33].

• Adaptivity: From an SDT perspective, support should track the user’s changing competence and relatedness conditions. Static assistance becomes intrusive (thwarting autonomy), insufficient (thwarting competence), or socially mistuned as multi-party interaction evolves. Adaptivity provides scaffolding that intensifies, fades, or reconfigures to match learning, context, and the changing dynamics of social coordination[34].

• Negotiability: Rooted in autonomy, users must be able to endorse, decline, or override system actions. Without negotiation or reversal, users lose authorship and volition[32].

2.2 Philosophical and cultural influence

SDT and FEP explain how humans sustain motivation and stability under uncertainty, but they leave a prior normative question open: what kinds of selves are being shaped as perception, action, and social interaction become increasingly mediated by intelligent systems? This subsection adds a philosophical and cultural framing for why agency, interpretation, and social embeddedness must remain central and why T.A.N. becomes an ethical requirement.

Across many traditions, selfhood is relational and enacted through conditions rather than fixed or self-contained. In Buddhist philosophy, the self is an impermanent process (anattā) arising through interdependence[35]. Māori ontology similarly foregrounds relational identity through whakapapa and frames well-being as sustained through balanced relationships among people, communities, and environment[36]. Cross-cultural psychology likewise distinguishes relational and individual-centred models of self, showing that meaning, obligation, and autonomy are socially situated[37]. These views do not reject autonomy; they recast it as accountable and context-sensitive.

Cognitive science offers a parallel account. Embodied and enactive approaches argue that sense-making emerges through ongoing organism–environment coupling, not detached internal reconstruction[38-40]. The extended mind thesis similarly holds that cognition can span brain, body, artefacts, and social structures[3]. If selves are enacted through tools and relationships, AI is not just an external utility; it helps shape the conditions through which identity and experience are repeatedly constructed. Self++, therefore, treats the social environment as a functional substrate for agency and understanding, not an optional dimension.

A further implication of this relational stance is that what counts as autonomy, competence, and relatedness, and how they are weighted, is not culturally uniform. Cross-cultural psychology has long shown that the boundaries of the autonomous self, the role of obligation in competent action, and the forms of belonging that sustain well-being differ substantially across individual-centred and relational self-construals[37]. Indigenous frameworks such as Māori models of relational health foreground collective accountability and intergenerational connection as conditions for flourishing, not merely as a context for individual choice[36]. Buddhist accounts treat selfhood as processual and interdependent, making the very notion of a bounded “autonomous agent” a convention rather than a ground truth[35]. These differences are not peripheral to Self++; they are a primary reason why the framework adopts a procedural rather than substantive ethical stance. Self++ does not prescribe which values are correct or which model of selfhood is authoritative. Instead, it specifies interactional conditions (transparency, adaptivity, and negotiability) under which users and communities can recognise, reflect on, and act from their own endorsed commitments, whatever those commitments may be. T.A.N. is designed for moral and cultural plurality: transparency makes influence visible so it can be evaluated against local norms; adaptivity prevents the system from freezing around a single cultural default; and negotiability gives individuals and groups the power to contest, reconfigure, or refuse what the system surfaces and how. This procedural stance does not claim neutrality, because choosing what to make legible is itself a normative act, but it does ensure that such choices remain inspectable and revisable rather than hard-coded.

This relational stance is especially relevant for AI design when read alongside Dependent Origination (pratītyasamutpāda) and predictive processing. Dependent Origination holds that experience arises through interdependent causes and conditions. Predictive processing makes a structurally similar claim: perception is generated by hierarchical generative models that predict sensory input, so experience depends on the negotiation between signals and learned expectations[16,33,41]. Prior work notes resonances between Buddhist accounts of interdependent experience and predictive approaches[42]. Building on this, we map Dependent Origination to inferential perception: ignorance reflects model mismatch; formations shape priors; sense bases/contact sample evidence; and craving/clinging reflects the drive to resolve uncertainty, potentially hardening priors. The upshot is that perceptual qualities (e.g., a virtual object’s apparent “redness”), or qualia, are not intrinsic to stimuli, but emerge from relational conditions spanning input and interpretation.

A key ethical implication follows: if mediation conditions experience and identity, those conditions must be legible and adjustable. Predictive accounts and the FEP formalise this dependency: perception and action reflect ongoing model–evidence negotiation, and uncertainty minimisation can become maladaptive when systems push users toward premature closure, rigid priors, or habitual over-reliance[43,44]. The design question is therefore normative, not merely technical: XR and AI reshape the informational and social conditions that guide interpretation, attention, and obligation, thereby shaping the self that is enacted over time. SDT specifies acceptable direction for this influence: assistance should expand autonomy (authorship), build competence (capability, not substitution), and sustain relatedness (trust and belonging)[45,46].

The interactional requirement of co-determination is what makes such conditioning ethically tractable, and T.A.N. can be read as

• Transparency as Insight: Because mediation shapes experience, intent, bias, and uncertainty must be visible. Transparency clarifies what is being altered, why, and with what confidence, enabling trust calibration[33].

• Adaptivity as Impermanence: Users develop; support must change with them. Adaptivity tunes (and fades) assistance as goals, context, and competence evolve, avoiding stale assumptions and dependency[47].

• Negotiability as Volitional Action: Users must be able to consent, contest, and override. Without meaningful veto and reversal, systems displace authorship and moral responsibility[26,45].

Together, this framing shows why T.A.N. is an ethical requirement, not merely a “trust mechanism”: mediated systems must be transparent, adaptive, and negotiable so uncertainty is regulated with users rather than for them.

The ethics of manipulation literature provides a complementary negative argument for these requirements. Philosophical accounts identify three main characterisations of manipulative influence: bypassing rational deliberation, trickery (inducing faulty mental states), and pressure (non-coercive but difficult-to-resist influence)[48]. Manipulation is widely held to undermine the validity of consent[49] and to “pervert the way that person reaches decisions, forms preferences, or adopts goals”[50]. More recent work characterises it as a hidden influence that targets cannot easily become aware of[51]. These characterisations are directly relevant to XR–AI systems, where perceptual mediation, personalised nudging, and delegated action all create conditions under which influence can become covert, difficult to resist, or substitutive of the user’s own reasoning. T.A.N. can be read as a systematic defence against all three forms. Transparency prevents trickery by making the system’s intent, reasoning, and uncertainty legible, so users cannot be induced into faulty beliefs about what is being influenced or why. Negotiability prevents pressure by ensuring the user always retains a viable exit; consent, override, and revocability eliminate the “awkward and difficult to resist” condition that characterises manipulative pressure[49]. Adaptivity prevents the subtlest form, bypassing rational deliberation, by ensuring that support engages and progressively strengthens the user’s own deliberative capacities rather than substituting for them; a system that never fades its scaffolding effectively outsources rational deliberation, and over time, such functional outsourcing becomes indistinguishable from bypassing it. 
Under these conditions, the system’s influence operates not by bypassing or subverting rational deliberation, but by scaffolding it, providing the informational and attentional conditions under which the user can deliberate more effectively while retaining full authorship over the resulting decision. In this reading, co-determination is not merely non-manipulative influence; it is structurally anti-manipulative, because it preserves and strengthens the very deliberative processes that manipulation subverts.

2.3 Extended reality as a perceptual filter: Dependent origination and predictive control

XR, encompassing AR, mixed reality (MR), and VR, is a technological frontier for modulating human perception. Recent work frames XR systems as technologies that can modulate the incoming light field itself rather than merely overlay virtual content, highlighting that XR operates as a perceptual filter acting prior to conscious interpretation[52]. Beyond addition, XR enables the subtraction or alteration of sensory input through techniques such as Diminished Reality[53], allowing aspects of the environment to be suppressed or transformed. Together, these capabilities constitute a form of mediated reality in which XR actively filters perceptual evidence rather than passively displaying information. By selectively amplifying, attenuating, or removing stimuli, XR systems shape what users attend to and how they interpret their surroundings. Perceptual filtering should therefore not be treated as a neutral presentation choice, but as an intervention that warrants disclosure of what is being altered and a user-legible rationale for why. This perspective also motivates XR as a systematic testbed for human–AI interaction research. Wienrich and Latoschik[54] propose an XR–AI continuum and “eXtended AI”, arguing that XR can be used to prototype and study prospective AI embodiments and interfaces in controlled, high-fidelity contexts before deployment.

In practice, XR-mediated filtering can be applied in both constructive and protective ways. Constructively, XR can foreground

• Transparency in Perception: The system must disclose how it is filtering reality. If an XR system suppresses visual noise (e.g., removing ads or clutter[60]), users must be aware that information is being hidden and why. Perceptual transparency prevents users from mistaking a curated evidential stream for objective reality.

• Adaptivity in Scaffolding: Perceptual enhancements, such as highlighting task-relevant cues[55], should not become fixed or miscalibrated supports. True adaptivity implies that as a user learns to notice patterns (increasing perceptual competence), highlights can fade or re-target, transferring predictive load back to the user while preserving support when conditions change.

• Negotiability of Reality: Users must have the power to define and revise their perceptual boundaries. Whether it is a therapeutic application modulating anxiety triggers or exposure in Post-Traumatic Stress Disorder (PTSD) treatment[61], or a productivity tool filtering distractions, users should be able to inspect, override, and revert filtering on demand, including simple “show me what was removed” controls.
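The perceptual obligations listed above can be made concrete with a small data structure. The following is a minimal sketch, not a prescribed Self++ implementation: `FilterAction` and `PerceptualFilterLedger` are hypothetical names, and the scene is modelled as a plain list of string identifiers purely for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class FilterAction:
    """One disclosed change to the scene (hypothetical record type)."""
    target: str          # what was altered, e.g. "ad_banner"
    operation: str       # "remove", "attenuate", or "amplify"
    rationale: str       # user-legible reason for the change
    active: bool = True  # False once the user has reverted it


@dataclass
class PerceptualFilterLedger:
    """Keeps every filtering decision inspectable and reversible."""
    actions: list[FilterAction] = field(default_factory=list)

    def apply(self, target: str, operation: str, rationale: str) -> FilterAction:
        # Transparency: no change is applied without a logged rationale.
        if not rationale.strip():
            raise ValueError("a filter needs a user-legible rationale")
        action = FilterAction(target, operation, rationale)
        self.actions.append(action)
        return action

    def show_removed(self) -> list[tuple[str, str]]:
        # "Show me what was removed": active removals with their reasons.
        return [(a.target, a.rationale) for a in self.actions
                if a.active and a.operation == "remove"]

    def revert(self, target: str) -> int:
        # Negotiability: undo all active filtering for a target on demand.
        reverted = 0
        for a in self.actions:
            if a.active and a.target == target:
                a.active = False
                reverted += 1
        return reverted

    def visible(self, scene: list[str]) -> list[str]:
        # Scene items that remain once active removals are applied.
        hidden = {a.target for a in self.actions
                  if a.active and a.operation == "remove"}
        return [item for item in scene if item not in hidden]
```

The design choice worth noting is that the ledger, not the renderer, is the source of truth: anything hidden from view is by construction listed in `show_removed()` and can be restored with `revert()`.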

First, XR can function as a perceptual enhancer to reduce surprise by providing timely, task-relevant cues that make situations more predictable. If perception is, as Andy Clark suggests, a kind of “controlled hallucination” constrained by sensory feedback, then XR can be understood as an externalised intervention in the evidence that stabilises perceptual inference[62]. By enhancing signal quality or suppressing noise, XR systems shape the feedback that constrains expectations, making environments more predictable and cognitively manageable. Examples include AR navigation overlays that reduce wayfinding ambiguity[55], military helmet displays that stabilise situational awareness in fast-changing environments[63], and surgical AR systems that integrate imaging data directly into the operative field[64]. However, cueing can also become static or miscalibrated if it is not designed to adapt as competence develops. Adaptivity, therefore, implies scaffolding that can be intensified, faded, or re-targeted as user skill and context change, transferring predictive load back to the user where appropriate while preserving support when conditions change.
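The intensify, fade, and restore behaviour described above can be illustrated with a simple update rule. This is a sketch under stated assumptions rather than a validated policy: the function name, the 0.8 success target, the 0.1 step size, and the choice to restore full support whenever the context changes are all hypothetical values chosen for illustration.

```python
def update_cue_intensity(intensity: float,
                         recent_success_rate: float,
                         context_changed: bool,
                         target_rate: float = 0.8,
                         step: float = 0.1) -> float:
    """Return the next cue intensity in [0, 1] (0 = no cue, 1 = full cue)."""
    if context_changed:
        # Assumption: when conditions change, full support is restored
        # so the user is not left without scaffolding in a new situation.
        return 1.0
    if recent_success_rate >= target_rate:
        # User is performing well: fade the cue and hand predictive
        # load back to the user.
        intensity -= step
    else:
        # User is struggling: intensify the cue.
        intensity += step
    # Clamp to the valid range and round away floating-point noise.
    return round(min(1.0, max(0.0, intensity)), 3)
```

For example, a trainee who succeeds on 90% of recent steps at intensity 0.5 would move to 0.4, whereas the same trainee entering an unfamiliar panel layout (`context_changed=True`) would return to 1.0.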

Conversely, XR can be used as a perceptual filter to minimise surprise or stress by attenuating extraneous or harmful inputs. The

These ethical requirements become even clearer in fully synthetic VR. By presenting a largely constructed sensorium, VR allows systematic manipulation of the relationship between expectation and sensation, making the role of prior beliefs in shaping experience explicit. Classic embodiment illusions, such as virtual limb or full-body ownership, arise when visual and sensorimotor contingencies align with the brain’s expectations, leading users to experience virtual bodies as their own[66]. The resulting sense of presence, the feeling of “being there”, can be understood as successful perceptual inference that the virtual world is sufficiently real to act within[67,68]. Importantly, compelling experience depends not only on rendering fidelity but also on behavioural and narrative coherence, because incoherent cues can collapse plausibility even in highly immersive systems[69]. This implies a transparency obligation that goes beyond “what was rendered”: systems should help users distinguish evidential cues from narrative framing, and support stepping out of persuasive framing when desired.

Empirical studies show that controlled manipulation of perceptual evidence can yield lasting changes in internal models.

Taken together, these examples show XR acting as both a perceptual enhancer and filter, enabling direct intervention in the inferential processes that generate perception by adding, removing, or restructuring sensory evidence. Such mediation parallels operant conditioning, where stimulus presence or removal guides learning and behaviour[72], a mechanism already leveraged in XR design to intentionally engage or disengage users[73]. To avoid drifting from support into behavioural control, reinforcement intent should be explicit, auditable and user-configurable in high-stakes contexts.

XR thereby makes tangible the dependent-origination insight that experience is conditioned, while predictive processing and FEP explain how altered evidence reshapes inference over time[42,44]. Recent AR work also operationalises SDT directly by testing how adaptive assistance shifts perceived autonomy: in AR-assisted construction assembly, low-agency control reduced workload but also reduced perceived autonomy, highlighting the agency trade-off that Self++ is designed to manage[74]. Co-determination then specifies the interactional obligation for XR perceptual filtering: because XR can alter the evidential conditions of experience, users should be able to recognise what has been amplified, attenuated, or removed, why that regulation is occurring, and how to inspect, revise, or reverse it. In this way, perceptual support can remain aligned with autonomy, competence, and relatedness rather than becoming a covert form of behavioural control.

2.4 Human–AI interaction and teaming in XR

The preceding sections framed XR as a mechanism for intervening on the evidential conditions of experience (through perceptual filtering), and situated Self++ within a broader philosophical view in which experience and identity are enacted through relational conditions. When we move from perception to action, the locus of risk and opportunity shifts: the AI is no longer merely shaping what is seen or attended to, but increasingly participates in goal selection, planning, and execution. This transition places Self++ within research on Human–Agent Teaming (HAT), also discussed as Human–AI Teams (HATs), which studies how humans and autonomous systems coordinate to achieve shared goals[1,75,76]. In XR and metaverse-like settings, teaming is not only informational but embodied and situated: coordination unfolds through shared spatial context, sensorimotor coupling, and the ongoing regulation of cognitive load and uncertainty.

A central obstacle for effective teaming is the user’s difficulty in forming accurate mental models of an agent’s state and reasoning, a challenge Norman characterises as the “gulf of evaluation”[77]. In XR, this gulf can widen: immersive presentation may increase perceived immediacy and credibility, while the agent’s internal uncertainty, constraints, and operating assumptions remain hidden. This is precisely where the interactional stance of co-determination becomes necessary. Rather than assuming fixed tool use or unilateral automation, co-determination treats human and agent as joint participants in a coupled system, requiring that the agent’s intent, boundaries, and uncertainty be legible enough for the user to retain volitional control. Users also carry expectations about what a “good” AI teammate should be. Prior work[76] shows that people often expect AI partners to behave with human-like reliability, cooperativeness, and contextual sensitivity, and mismatches between these expectations and actual system behaviour can undermine trust and coordination. These expectation dynamics strengthen the case for co-determination as a stabilising baseline: the system must help users calibrate what the agent can and cannot do, rather than letting anthropomorphic assumptions silently drive reliance. Building on this trajectory, cognitive externalisation is now evolving into adaptive agent teammates, where calibrated trust shapes effective interaction and HAT outcomes[78].

In this practical domain of teaming, the co-determination principles (T.A.N.) must be implemented as specific interaction mechanisms rather than treated as abstract ethical principles:

• Transparency for bridging the gulf of evaluation: The agent should make its internal state legible enough for users to form accurate mental models, including what it is optimising for, what it believes, and where uncertainty or constraints apply. This reduces evaluation gaps and supports trust calibration[19,24,77].

• Adaptivity for dynamic allocation of initiative: Effective teammates do not behave identically regardless of context. Agents should adjust initiative, timing, and level of autonomy as user confidence, workload, and task conditions change, supporting decision outcomes without overwhelming or bypassing the user[56].

• Negotiability for consensual delegation and recovery: As agents become more capable, the risk of automation bias and loss of control increases. Users should be able to consent to actions, revise autonomy levels (e.g., “help me do this” versus “do this for me”), and override or undo decisions, preserving authorship and accountability[79].
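As an illustration of how the Transparency and Negotiability items above might surface in an interface contract, the following is a minimal sketch rather than a prescribed implementation. The names `Autonomy`, `Proposal`, and `NegotiableAgent` are hypothetical and do not come from any cited system; Adaptivity is deliberately left out to keep the example short.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable


class Autonomy(Enum):
    ASSIST = "help me do this"    # agent proposes; each action needs user approval
    DELEGATE = "do this for me"   # agent may act; the user can still undo


@dataclass
class Proposal:
    description: str              # Transparency: what the agent intends to do
    rationale: str                # Transparency: why it intends to do it
    do: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class NegotiableAgent:
    autonomy: Autonomy = Autonomy.ASSIST
    history: list = field(default_factory=list)

    def submit(self, proposal: Proposal, user_approves: bool = False) -> bool:
        """Run the proposal only if the current autonomy level permits it."""
        if self.autonomy is Autonomy.ASSIST and not user_approves:
            return False          # consent is missing, so nothing happens
        proposal.do()
        self.history.append(proposal)
        return True

    def undo_last(self) -> bool:
        """Negotiability: reverse the most recent executed action, if any."""
        if not self.history:
            return False
        self.history.pop().undo()
        return True
```

In this sketch the user revises the autonomy level simply by assigning `agent.autonomy`, and every executed action stays reversible through `undo_last`.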

General human–AI interaction guidance reinforces these requirements. Established guidelines emphasise making clear why the system acted, supporting efficient correction, and enabling undo and refinement[47]. In XR, where the system can shape both evidence and action, such principles are not cosmetic: they protect autonomy and competence by reducing surprise, supporting trust calibration, and preventing opaque shifts in control. Recent empirical work on team dynamics in human–AI collaboration further emphasises that teaming outcomes depend on interaction quality, affecting confidence, satisfaction, and accountability[24]. From a Self++ perspective, these are not merely usability metrics; they indicate whether an agent supports or frustrates SDT needs, and whether the coupled system converges towards stable, low-surprise coordination.

This interactional framing is consistent with XR-specific work on explanation and intelligibility. The XAIR framework[80] for explainable AI in AR argues that systems should generate explanations with AI outcomes and keep them accessible to support user agency, while using manual, user-triggered delivery as the default due to limited cognitive capacity in AR. XAIR further recommends that automatic, just-in-time explanations be reserved for constrained cases (e.g., surprise or confusion, unfamiliar outcomes, or model uncertainty) and only when the user has enough capacity to attend to them. Beyond timing, XAIR emphasises end-user configuration and a longer-term user-in-the-loop co-learning process, where systems adapt to users while users’ understanding and AI literacy evolve. In HAT terms, these design commitments instantiate the co-determination principles (T.A.N.): explanation access and state-legibility as Transparency, timing and initiative control as Adaptivity, and user-trigger, configuration, and reversibility as Negotiability.
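The delivery rule described above (manual by default, automatic only in constrained cases and only when the user has capacity) can be sketched as a small decision function. This is an illustrative reading, not XAIR's own implementation: the `Context` fields, the `choose_delivery` name, and the uncertainty threshold are assumptions introduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Delivery(Enum):
    ON_REQUEST = "on_request"   # explanation exists; the user triggers it
    AUTOMATIC = "automatic"     # system surfaces it just in time


@dataclass
class Context:
    user_seems_confused: bool     # hypothetical signal (e.g. hesitation)
    outcome_is_unfamiliar: bool   # user has not seen this kind of outcome
    model_uncertainty: float      # 0.0 (certain) to 1.0 (very uncertain)
    user_has_capacity: bool       # enough spare attention to read it
    auto_explanations_enabled: bool = True   # end-user configuration


UNCERTAINTY_THRESHOLD = 0.6   # illustrative value, not taken from XAIR


def choose_delivery(ctx: Context) -> Delivery:
    """Manual delivery is the default; automatic delivery is the exception."""
    if not ctx.auto_explanations_enabled or not ctx.user_has_capacity:
        return Delivery.ON_REQUEST
    triggered = (
        ctx.user_seems_confused
        or ctx.outcome_is_unfamiliar
        or ctx.model_uncertainty >= UNCERTAINTY_THRESHOLD
    )
    return Delivery.AUTOMATIC if triggered else Delivery.ON_REQUEST
```

Note that the explanation is assumed to be generated in both branches; only its delivery differs, which keeps it accessible on request.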

Recent XR-specific HAT work further illustrates how embodied context changes the nature of coordination. Zhang et al.’s “Virtual Triplets” framework[18] analyses dynamics between the human, the virtual agent, and the physical task across synchronous and asynchronous settings. Successful assistance requires sensitivity to physical constraints, task progress, and translation between digital instruction and physical execution, aligning with the Competence overlay of Self++: the agent’s role is not to replace skill, but to scaffold effective action[34]. XR training research demonstrates this scaffolding role in practice. HAT Swapping[19] explores how virtual agents can act as stand-ins for absent human instructors, enabling guidance and feedback to persist across time and personnel while preserving the structure of collaborative training. AVAGENT[81] similarly shows how AI-powered virtual avatars can bridge asynchronous communication by capturing, transforming, and re-presenting human intent and context across time, extending HAT beyond real-time copresence into persistent coordination in XR. Together, these systems highlight both the promise and responsibility of XR agents: they can reduce uncertainty and support skill acquisition, but only if guidance remains transparent, appropriately timed, and adjustable to the learner’s evolving competence.

As agents become more capable, the design challenge intensifies. Multimodal foundation models enable systems that can perceive and act across vision, audio, language, and contextual signals, supporting increasingly high-level delegation[82]. However, increased capability increases the risk of misalignment and opacity, especially when the user cannot inspect the agent’s evolving beliefs or intentions. Work on transparency for modern AI systems emphasises interactive scrutability, user education, and attention to

These issues are not unique to XR, and lessons from human–AI co-creation generalise. Studies of collaborative writing with language models highlight recurring problems of trust calibration, user control, and authorship, even in ostensibly low-stakes tasks[85]. Recent work on agency in large language model (LLM)-infused tools similarly suggests that preserving authorship depends on making suggestions legible and easy to veto, so that assistance remains subordinate to the user’s intent rather than silently steering outcomes[86]. These findings map naturally onto the Autonomy overlay of Self++ and provide concrete interaction criteria for

Finally, the social dimension of teaming is essential, particularly for the Relatedness overlay of Self++. Triadic human-agent dynamics show that agents can mediate human-human collaboration, influencing how people coordinate and communicate with one

Taken together, HAT in XR offers the interactional mechanisms through which Self++ can be realised across the three overlays: competence, autonomy, and relatedness. XR can reorganise sensory evidence and reduce uncertainty, but as AI shifts from filter to collaborator, the conditions for healthy regulation of uncertainty become fundamentally interactional. Co-determination provides the bridge from the cognitive and philosophical foundations to concrete HAT practice: by prioritising the co-determination principles (T.A.N.), XR agents can scaffold skill, preserve volitional control, and strengthen social embeddedness, rather than causing relational displacement.

3. The Self++ Architecture: Three Overlays of Augmented Agency

Self++ organises human–AI coupling into three concurrently activatable overlays (Self, Self+, Self++), forming an architecture of augmented agency (Figure 1). Each overlay targets a different temporal and functional scale of free-energy minimisation, corresponding to nested timescales of adaptation and echoing “nested learning” in AI[92]. The naming (Self, Self+, Self++) does not imply separate selves, but an expanding scope of agency support: from here-and-now action to deliberation and policy formation, to social embeddedness.

Importantly, Self++ does not assume a strict pipeline in which Overlay 1 must finish before Overlay 2 or Overlay 3 begins. In realistic settings (training, teamwork, community participation), competence-building, autonomy exercise, and relatedness-support often

A clarification is important here: the temporal-horizon labels, short-, intermediate-, and long-term horizons (Table 1), denote where each overlay’s design commitments are primarily anchored, not where they are exclusively confined. A Tutor interaction may unfold in seconds to minutes per episode, while a tutoring relationship persists for months; what anchors the Tutor role at the sensorimotor timescale is that its key design variables, such as cue timing, step gating, and attention regulation, are specified and evaluated at that temporal grain. Conversely, a Social Facilitator primarily operates at the relational timescale while still needing to respond in real time to conversational dynamics. The overlay labels, therefore, indicate the primary design horizon for each set of role patterns, not a boundary on when they may be active.

| Lvl | Role | Role objective | Example XR-AI behaviours | Transparent | Adaptive | Negotiable |

| Overlay 1 (Self): Competence support (short-horizon) | ||||||

| R1 | Tutor | Reduce novice uncertainty; establish safe learnable corridor | Anchored arrows and ghosted exemplars with step gating; clutter suppression; completion detection with attention-aware pacing and corrective feedback | Cue provenance; disclose suppression; show limits | Fade prompts; retarget errors; adjust pacing | Pause/skip; show all vs minimal; override highlights |

| R2 | Skill Builder | Calibrate + generalise; variability with feedback, not scripting | Ghost tracks and shadow end-states with partial hints; performance analytics with adaptive hinting and controlled variability | Explain feedback basis; show comparison model | Increase task variability; withhold hints; change modality | User-set difficulty; toggle ghosts; consent for perturbations |

| R3 | Coach | Robustness under stress; self-correction; prevent brittle mastery | Fault injection and overlay removal with altered timing; safety/quality monitoring; targeted debrief with fall-back to R1/R2 | Disclose perturbation intent; disclose role/agency shifts | Adjust challenge intensity; adapt thresholds; taper monitoring | Opt-in for stress tests; emergency stop; hand-off confirmation |

| Overlay 2 (Self+): Autonomy support (intermediate-horizon) | ||||||

| R4 | Choice Architect | Shape decision context (salience) while preserving authorship | Lightweight cueing with route salience (alternatives remain selectable); multi-criteria filtering; attention weighting with trade-off previews | Mark nudges; link to goals; label optimised criteria | Update weights; fade as user internalises; reduce during load | Opt-out slider; consent for high-stakes; unnudged view |

| R5 | Advisor | Externalise deliberation; make counterfactuals inspectable | Interactive dashboards with side-by-side futures and uncertainty bands; value elicitation; model explanation with alternatives and effect highlights | Expose sources; distinguish evidence vs framing; show unknowns | Tune depth; switch modality; calibrate to time pressure | Editable goals; ask-for-alt; decline reasoning; override defaults |

| R6 | Agentic Worker | Delegated execution under user policy; proposal-approval loop | Plan and execute with XR review checkpoints; plan trace with progress visibility; step confirmation with safe interrupts and rollback | Show intent/plan; audit trail; capability limits; risk disclosure | Adjust frequency by stakes; learn checkpoints; degrade gracefully | Explicit delegation; revoke anytime; re-scope; adjustable autonomy |

| Overlay 3 (Self++): Relatedness & purpose (long-horizon) | ||||||

| R7 | Contextual Interpreter | Legibility of identity/norms + impacts; reduce social surprise | Human vs AI labels and role badges; provenance overlays with impact cards; norm reminders; plural framing for contested topics | Radical disclosure of agent identity, show provenance | Context density tuned to attention; adapt to culture/values | Controls for context appearance; sensitivity sliders; opt-out |

| R8 | Social Facilitator | Improve coordination + repair; increase human-human connection | Shared gaze and participation balance visualisation; micro-clarifications; breakdown detection with viewpoint summaries and perspective-taking prompts | Disclose sensing granularity; explain prompts + thresholds | Do-nothing mode when thriving; calibrate to group norms | Collective opt-in; privacy-by-role; group-negotiable |

| R9 | Purpose Amplifier | Long-horizon value coherence; steer away from disavowed futures | Value-facing simulations with nudges-in-narrative and framing controls; periodic reflections; contestable inferences with governance hooks | Reason + framing legibility; evidence vs narrative separation | Internalisation-focused fading; calibrate identity strength | Contestability; escalation requires opt-in; collective pathways |

XR: extended reality; AI: artificial intelligence.

Overlay 1 (Self): Competence at the sensorimotor timescale (short-horizon). This overlay augments perception and skill, reducing immediate prediction errors in action execution[33].

Mechanistic coupling (SDT-FEP): Competence ↔ minimisation of sensorimotor prediction error. Competence is the subjective experience of a high-precision internal model effectively governing action. When the AI scaffolds skill (e.g., highlighting a target), it reduces the gap between predicted and actual sensory feedback, validating the user’s model of agency[34,95].

Overlay 2 (Self+): Autonomy at the deliberative and situational timescale (intermediate-horizon). This overlay augments cognition and decision-making, helping users navigate complex choices and intermediate goals by reducing strategic uncertainty[32].

Mechanistic coupling (SDT-FEP): Autonomy ↔ preservation of high-level priors (policy selection). Autonomy reflects the ability to

Overlay 3 (Self++): Relatedness at the developmental and existential timescale (long-horizon). This overlay augments social connection and purpose, steering long-term trajectories and relationships by aligning actions with enduring values and shared social models[96].

Mechanistic coupling (SDT-FEP): Relatedness ↔ alignment of shared generative models. Relatedness arises from synchronisation of internal models between agents: social connection enables partial offloading of uncertainty onto the group. AI support here minimises “social surprise” (misinterpretation of others) and helps the user remain embedded in a shared communicative web[42,97].

A methodological note on these couplings: SDT and FEP operate at different levels of description; SDT is a motivational theory grounded in decades of experimental psychology, while FEP is a formal account of biological self-organisation rooted in variational inference. The correspondences proposed above (competence ↔ sensorimotor prediction-error minimisation; autonomy ↔

| P | Proposition (what must be true) | Evaluation checks (what to test/measure) |

| P1 | Concurrency: Overlays act concurrently (not a pipeline) and can interfere. | Test overlap interference and recovery: (i) run Overlay 1 guidance while Overlay 2 deliberation UI is present (e.g., motor task + counterfactual dashboard) and measure errors/time-on-task; (ii) measure reclaim-time (time to pause/override after an AI-led phase) and success rate of taking back control. |

| P2 | Timescale Alignment: SDT needs map to uncertainty targets across temporal scales. | Evaluate on the right horizon: Overlay 1 with immediate sensorimotor metrics (errors, collisions, smoothness); Overlay 2 with decision quality and goal-alignment/endorsement (regret, confidence, stated-goal match over days); Overlay 3 with longitudinal drift indicators (relationship repair, wellbeing, dependence, value-consistency) over weeks/months, not only short task scores. |

| P3 | Inspectability: Legitimate augmentation requires an inspectable, contestable AI voice. | Probe legibility and ownership: users can state what was influenced (evidence, salience, delegation), why, and how to reverse the present intervention. Behavioural test: can users successfully access alternatives, inspect reasons, and undo or suspend the current support? |

| P4 | T.A.N. Scaling: Co-determination strength must scale with scope and initiative. | Audit proportional safeguards: higher-scope interventions in Overlay 3 must provide stronger provenance and incentive disclosure, clearer consent boundaries, broader reversibility, and more complete audit trails than lower-scope Overlay 1 support. Test whether safeguard strength increases appropriately with intervention scope and initiative. |

| P5 | Transition Legibility: Shifts in agency between role patterns must be perceptible and reversible. | Test hand-offs and escalation/de-escalation: users must correctly identify when agency has shifted, who is acting, and under what authority. Measure transition awareness, misattribution rates, and recovery after failed or unwanted hand-offs. |

| P6 | Endorsement over Compliance: Autonomy support preserves authorship over revision, not mere compliance. | Check internalisation, not just performance: users endorse outcomes as their choice and can explain “because...” in terms of their goals/values. Compare nudged vs unnudged conditions: if outcomes improve but endorsement drops or users cannot justify choices, autonomy support failed. |

| P7 | Collective Negotiability: Relatedness support requires shared-model alignment and group negotiability. | Verify group legitimacy: collective opt-in for sensing/visualisations; privacy-by-role defaults; and opt-out without social penalty (no status loss, no exclusion cues). Test whether participants can contest aggregation rules/thresholds (e.g., participation metrics) and still collaborate smoothly. |

| P8 | Governance Contestability: Long-horizon alignment is socio-technical and requires contestation pathways. | Audit contestability of action and framing: users and affected groups can challenge not only recommendations but also optimisation targets, interpretive categories, escalation criteria, and institutional defaults. Verify pathways for review, appeal, and collective contestation where communities are affected. |

How to use (self-contained): (1) Map features to Self++ role patterns (R1-R9) across the three concurrently activatable overlays (Table 1); (2) For each claimed role pattern, verify co-determination (T.A.N.) commitments at the required strength (reasons/provenance/incentives; fading/calibration; override/contestability); (3) Evaluate transitions and long-horizon drift under realistic concurrent operation, not only steady-state task performance. Evidential status: Section 7.2 maps each proposition to its current empirical support (direct, indirect, or open hypothesis) and identifies evaluation priorities. SDT: Self-Determination Theory.
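To make the P1 check concrete, the sketch below computes reclaim-time and take-back success rate from a session event log. It is one possible operationalisation under stated assumptions: the event names (`ai_phase_start`, `reclaim_attempt`, `user_in_control`) and the choice to time from the user's first reclaim attempt are hypothetical, not part of the framework.

```python
from statistics import median
from typing import NamedTuple, Optional


class Event(NamedTuple):
    t: float    # seconds since session start
    kind: str   # "ai_phase_start" | "reclaim_attempt" | "user_in_control"


def reclaim_episodes(events: list) -> list:
    """One entry per reclaim attempt made during an AI-led phase:
    seconds until the user is back in control, or None if never regained."""
    results: list = []
    pending: Optional[float] = None   # time of the open reclaim attempt
    ai_led = False
    for e in sorted(events, key=lambda ev: ev.t):
        if e.kind == "ai_phase_start":
            if pending is not None:   # previous attempt never succeeded
                results.append(None)
                pending = None
            ai_led = True
        elif e.kind == "reclaim_attempt" and ai_led and pending is None:
            pending = e.t
        elif e.kind == "user_in_control":
            if pending is not None:
                results.append(e.t - pending)
                pending = None
            ai_led = False
    if pending is not None:           # session ended mid-attempt
        results.append(None)
    return results


def summarise(episodes: list) -> dict:
    """Aggregate attempts into the two P1 measures."""
    successes = [d for d in episodes if d is not None]
    return {
        "attempts": len(episodes),
        "success_rate": len(successes) / len(episodes) if episodes else None,
        "median_reclaim_time_s": median(successes) if successes else None,
    }
```

Reporting failed attempts as `None` rather than dropping them keeps the success rate honest when users give up on taking back control.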

Within each overlay, Self++ specifies three role patterns (R1–R9 in total), each realised as an AI role that supports the user under

The overlays should also not be understood as merely coexisting in parallel. In practice, they actively shape one another. Gaining clarity about what one values (Overlay 3) can reveal new skills worth developing (Overlay 1) and reframe choices about how to pursue them (Overlay 2). Conversely, building new competence (Overlay 1) can expand what options feel available in deliberation (Overlay 2) and, over time, reshape identity, commitment, and purpose (Overlay 3). This recursive dynamic of doing, choosing, and becoming means that the self interacting with Self++ at month six is not identical to the self that began at month one. Self++ accommodates this by treating overlays as concurrently activatable and mutually permeable: outputs from one overlay, such as a refined value commitment in Purpose Amplifier (R9), can become updated inputs to another, such as new learning goals for Tutor (R1). An important direction for future work is to investigate this generative cycling empirically, tracing how interventions at one overlay propagate through the others over longitudinal timescales.

Crucially, role patterns act as adaptive scaffolds: as competence, context, and risk change, the system transitions between role patterns or fades support to prevent over-reliance and to preserve human autonomy and relationships[98]. To keep augmentation legitimate rather than covert control, Self++ applies the co-determination principles (T.A.N.) across all overlays:

• Transparency: Sufficient information for accurate mental models of intent, limits, incentives, and uncertainty.

• Adaptivity: Support tuned over time as competence and context evolve (including fading).

• Negotiability: Volition preserved via consent, override, and adjustable autonomy.

T.A.N. requirements strengthen with scope and initiative: higher-overlay role patterns (especially those touching identity, relationships, or long-horizon behaviour) demand stronger transparency and negotiability as safeguards[93,94].

4. Overlay 1 – Foundational Augmentation of the Self (Competence Support)

Overlay 1 targets competence at the sensorimotor timescale: helping users perceive and act reliably in an enriched environment, while keeping early errors and overload low enough for learning to take hold. Self++ does not treat this as a prerequisite pipeline stage. Competence support often runs in parallel with autonomy and relatedness supports (for example, training in teams), but Overlay 1 remains the point where the system most directly shapes perceptual evidence and action feedback.

Mechanistically, Overlay 1 reduces sensorimotor prediction error so users experience effectance and learnable control: attention is guided, actions are constrained into safe steps, and feedback tightens the link between intention and outcome. In SDT terms, this sustains competence by enabling early, attributable successes; in FEP terms, it increases the precision of action-outcome mappings and reduces surprise during control[16,32]. We define three role patterns that mirror established progressions in skill acquisition from novice to proficient performance: Tutor (R1), Skill Builder (R2), and Coach (R3)[99]. As with higher overlays, these role patterns are

4.1 Role pattern R1: Guided familiarisation (AI as Tutor)

At the outset of a new task or environment, novices face high uncertainty because relevant cues, action boundaries, and error consequences are not yet well-modelled. In the Tutor role, the AI adopts a proactive stance that structures the experience into a learnable corridor: it highlights what matters, suppresses what is distracting, and sequences actions so that each step is achievable before the next is introduced. This is classic scaffolding in the Zone of Proximal Development[34], but implemented through in-situ perceptual guidance rather than detached instructions.

In XR, this guidance can be spatial and embodied: key objects or regions can be highlighted, next actions can be indicated with anchored arrows[100] or ghosted exemplars[101], and irrelevant elements can be visually deemphasised to reduce split attention. A practical pattern is step gating: the system reveals only the next required sub-action and advances when completion is detected, which keeps working memory demands bounded. Adaptive AR tutoring systems have operationalised this idea by monitoring tutorial-following status and adjusting the amount and form of guidance in real time[102]. When attention lapses, a Tutor can also regulate pacing through attention-aware playback (for example, pausing or slowing guidance when gaze or location cues indicate the user has fallen out of sync), helping the user recover without compounding errors.
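The step-gating and attention-aware pacing pattern described above can be sketched as a small state machine. This is a minimal illustration, not the design of any cited tutoring system: `StepGatedTutor` is a hypothetical name, and `step_completed` and `user_attending` stand in for detector outputs (completion detection, gaze or location cues) that a real XR system would have to supply.

```python
from dataclasses import dataclass, field


@dataclass
class StepGatedTutor:
    steps: list                      # ordered sub-actions of the task
    index: int = 0                   # position of the current step
    paused: bool = False             # True while the user is out of sync
    skipped: list = field(default_factory=list)

    def visible_cue(self):
        """Reveal only the current sub-action; nothing while paused or done."""
        if self.paused or self.index >= len(self.steps):
            return None
        return self.steps[self.index]

    def update(self, step_completed: bool, user_attending: bool) -> None:
        """Called once per sensing tick with the (assumed) detector outputs."""
        if self.index >= len(self.steps):
            return
        if step_completed:
            self.index += 1          # gate opens only on detected completion
        # Attention-aware pacing: hold guidance until the user is back in sync.
        self.paused = not user_attending

    def skip(self) -> None:
        """User-initiated skip of the current step (the pause/skip control)."""
        if self.index < len(self.steps):
            self.skipped.append(self.steps[self.index])
            self.index += 1
```

Because `visible_cue` never returns more than one step, working-memory demand stays bounded by construction, while `skip` keeps the pacing negotiable rather than imposed.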

Technically, the Tutor role overlaps with intelligent tutoring systems that use cognitive models to interpret learner actions and deliver context-sensitive feedback (for example, model tracing and related methods in cognitive tutors)[103]. The key difference in XR is that feedback can be embedded directly into the perceptual field, allowing guidance to be shown where and when it is needed rather than translated into verbal rules.

Empirical evidence supports the value of structured, in-situ guidance during early skill acquisition. In assembly-like tasks, AR instructions have been shown to reduce errors and improve performance relative to conventional instruction formats in controlled comparisons[104]. At the same time, the broader literature cautions that AR can either reduce or increase cognitive load depending on design choices, which strengthens the case for tightly scoped, well-timed guidance at R1[105].

Finally, the Tutor role pattern is designed as deliberately temporary for users whose capacity and goals support progression: as soon as the user demonstrates stable performance on a step, guidance should begin to fade (fewer cues, larger action windows, more

4.2 Role pattern R2: Scaffolded practice (AI as Skill Builder)

Once the user can complete the basic sequence under guided familiarisation, the AI shifts into the Skill Builder role pattern that prioritises practice, calibration, and generalisation. The support envelope deliberately widens: the system provides partial cues and performance feedback but stops prescribing every micro-action. The intent is to refine the user’s sensorimotor predictions while avoiding the brittleness that comes from rehearsing a single, fixed script. Motor-learning theory predicts that variability and appropriately structured interference during practice can improve transfer and retention, even if acquisition feels harder[108,109].

A hallmark of R2 is augmented feedback that keeps “what good looks like” visible while leaving execution to the user. Two common XR patterns are Ghost Tracks, which overlay time-aligned expert motion for in-situ trajectory and timing matching[17,110-113], and Shadow Workspaces, which anchor a target end-state silhouette (“shadow of success”) to support precise pose, placement, or orientation[17,105,114,115]. Together, they externalise comparison and reduce cognitive load during repeated practice while preserving active control.

Although these cues are most natural for 3D sensorimotor tasks, the underlying principle generalises: externalised reference structure reduces internal memory and computation by making intermediate steps, trajectories, or goal states inspectable[8]. In

Crucially, R2 also introduces controlled challenge. Rather than maximising ease, the system should keep the task in a learnable difficulty band by gradually withholding hints, expanding acceptable action ranges, and introducing mild perturbations (for example, small changes in order, timing constraints, or plausible micro-faults) so the user learns to adapt rather than imitate. This “challenge just beyond current mastery” is consistent with the challenge-skill balance emphasised in flow-oriented accounts of engagement and growth[119]. It also parallels curriculum ideas from machine learning, where a teacher proposes goals that are increasingly difficult but achievable, as in AMIGo[120]. In Self++, the Skill Builder role pattern therefore balances error reduction with productive difficulty: enough structure to prevent unproductive surprise, enough freedom and variability to build robust competence. By the end of R2, the user should rely on Ghost and Shadow cues primarily for fine-tuning, while completing substantial portions of the task without explicit step-by-step prompting.
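One way to keep practice inside a learnable difficulty band is to adjust hint support from a rolling success rate. The sketch below is illustrative only: the hint levels, window size, and band limits are assumed values, and `HintScheduler` is a hypothetical name rather than a component of any cited system.

```python
from collections import deque


class HintScheduler:
    """Withhold hints as success stabilises; restore them when it drops."""

    LEVELS = ("full", "partial", "minimal", "none")   # decreasing support

    def __init__(self, window=5, low=0.6, high=0.85, least_support="none"):
        self.recent = deque(maxlen=window)   # rolling record of outcomes
        self.low, self.high = low, high      # assumed difficulty band
        self.level = 0                       # start with full hints
        # User-set limit: support never fades below this level.
        self.cap = self.LEVELS.index(least_support)

    def record(self, success: bool) -> str:
        """Log one attempt and return the hint level for the next attempt."""
        self.recent.append(success)
        if len(self.recent) == self.recent.maxlen:
            rate = sum(self.recent) / len(self.recent)
            if rate > self.high and self.level < self.cap:
                self.level += 1              # too easy: withhold more
                self.recent.clear()
            elif rate < self.low and self.level > 0:
                self.level -= 1              # too hard: restore support
                self.recent.clear()
        return self.LEVELS[self.level]
```

The `least_support` parameter reflects the point that sustained scaffolding can be a valid endpoint: a user can cap fading at, say, `"partial"` without the scheduler pushing further.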

However, Self++ does not assume that all users will or should progress beyond R2. For individuals whose capacities, contexts, or preferences make sustained scaffolding the appropriate endpoint, including many users with disabilities who experience assistive technologies as extensions of self rather than temporary supports, remaining at R2 long term is a valid, competence-affirming outcome. What matters is whether the level of support is aligned with the user’s endorsed goals and current capacity, not whether it matches an externally imposed trajectory toward independence.

4.3 Role pattern R3: Mastery and resilience (AI as Coach)

Once the user is reliably proficient in routine conditions, the AI transitions to the Coach role pattern focused on robustness, adaptability, and self-correction. Guidance recedes: instead of persistent highlights or continuous overlays, the Coach monitors performance and introduces controlled perturbations to test whether the skill generalises beyond rehearsed cases. This deliberate use of “desirable difficulties” supports more durable, flexible learning than perfectly predictable practice[121,122] and matches accounts of expertise that emphasise deliberate, feedback-rich refinement over time[123].

In practice, the Coach varies scenarios, injects plausible faults, and occasionally withholds support (for example, removing an overlay or altering timing constraints) to expose brittle assumptions and reveal blind spots. It intervenes only when performance drops below a safety or quality threshold, preventing the consolidation of poor habits while keeping the user responsible for recovery and strategy. After each episode, the Coach provides a brief debrief and, if needed, temporarily reverts to Tutor or Skill Builder to remediate a specific sub-skill. In Self++ terms, R3 consolidates competence by reducing “surprise under stress”: the user learns not only to execute correctly, but to remain stable when conditions deviate from expectation[122].
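The Coach's intervention rule and post-episode fall-back can be sketched as two small functions. The thresholds, the `safety_margin` signal, and the mapping from sub-skill scores to R1 or R2 remediation are assumptions made for illustration; the framework itself does not fix these values.

```python
def should_intervene(quality: float, safety_margin: float,
                     quality_floor: float = 0.6,
                     safety_floor: float = 0.2) -> bool:
    """Stay silent unless performance falls below a quality or safety floor."""
    return quality < quality_floor or safety_margin < safety_floor


def debrief(subskill_scores: dict,
            builder_below: float = 0.7,
            tutor_below: float = 0.4) -> dict:
    """Map weak sub-skills to a temporary fall-back role pattern.
    Sub-skills at or above `builder_below` stay with the Coach and are omitted."""
    plan = {}
    for name, score in subskill_scores.items():
        if score < tutor_below:
            plan[name] = "R1 Tutor"           # re-establish the basic sequence
        elif score < builder_below:
            plan[name] = "R2 Skill Builder"   # more calibrated practice
    return plan
```

Keeping the fall-back per sub-skill, rather than per task, matches the idea that remediation is targeted and temporary while the user remains responsible for overall recovery and strategy.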

R3 also manages role transitions in team settings, so the user retains a coherent model of who is doing what. Abrupt hand-offs, silent autonomy shifts, or ambiguous identities can trigger mode confusion and automation surprise, especially in off-nominal

By the end of R3, the user should display functional mastery: resilient performance across varied conditions, recovery from errors without constant prompting, and correctly calibrated trust in the Coach as a safety net rather than a crutch.

5. Overlay 2 – Cognitive and Strategic Augmentation (Autonomy Support)

Overlay 2 shifts emphasis from executing skills to forming and revising policies: choosing goals, weighing trade-offs, and allocating attention and effort over time. Self++ does not treat the three overlays as a strict pipeline. Autonomy support often appears during competence building: even in training, learners must make meaningful choices (what to try next, when to speed up, whether to accept risk, when to request help) in order to demonstrate genuine competence. Accordingly, Overlay 2 can run concurrently with Overlay 1: the system may coach sensorimotor execution while also shaping the user’s decision context so choices remain aligned with the user’s own values and intentions.

This concurrent view matches mixed-initiative and adjustable-autonomy systems, where initiative and control shift fluidly between human and agent depending on task demands, user state, and risk, rather than advancing through fixed stages[93,94,129]. Mechanistically, Overlay 2 targets autonomy as policy selection: in SDT, autonomy is experienced as self-endorsed action[32]; in FEP terms, this corresponds to protecting high-level priors (values and goals) while using prediction to reduce uncertainty about consequences[16]. In Self++ terms, Overlay 2 is co-determination expressed at the cognitive timescale: a second voice that helps the user reflect, anticipate outcomes, and surface trade-offs, but does not smuggle in new goals or override the user’s higher-order commitments. This caution is reinforced by evidence that synthetic persuasion evaluations can diverge from human outcomes[20,130].

A key autonomy risk in modern ecosystems is that choice environments are routinely shaped by opaque recommendation logic, engagement optimisation, and dark-pattern design, steering behaviour while eroding the user’s sense of authorship[131,132]. Self++, therefore, requires decision support to remain co-determined: (i) legible enough for users to judge how the system is weighing attention and effort, (ii) responsive to changing goals and context, and (iii) subject to consent, override, and adjustable autonomy. This reflects long-standing guidance that automation should act as a collaborative partner rather than an invisible controller[22,87].

We define three role patterns in this overlay as Choice Architect (R4), Advisor (R5), and Agentic Worker (R6), reflecting increasing initiative in shaping the decision environment, explaining trade-offs, and executing actions, but always under user oversight, reversibility, and the co-determination principles (T.A.N.) introduced earlier.

5.1 Role pattern R4: Subtle guidance in choice (AI as Choice Architect)

At R4, the AI begins to shape the decision context rather than the decision itself. As a Choice Architect, it uses small changes in salience and friction to make goal-consistent options easier to notice and compare while leaving selection entirely with the user. This draws on classic choice architecture and nudging, but under a stricter co-determination constraint: the system may guide attention, but must not covertly redirect goals or exploit vulnerabilities[133-135]. In XR, this can be enacted through lightweight perceptual cueing, for example, gently highlighting items that match the user’s stated dietary goal in an AR aisle[56], or rendering a user-preferred route as more visually salient via in-view AR guidance while leaving all alternatives selectable[136,137].

Mechanistically, this role pattern operates by re-weighting attentional evidence: the interface makes some cues more precise (more noticeable, easier to act on) so that acting on existing intentions requires less search and self-control. Because the same mechanism can become manipulation, R4 should be treated as scaffolding for autonomy, not behaviour steering. Self++ therefore binds Choice Architect nudges to the co-determination principles (T.A.N.) as safeguards: Transparency about the fact that a highlight is system-generated and why it appears, Adaptivity that tracks the user’s changing priorities rather than a single platform metric, and Negotiability through opt-out, adjustable strength, and consent for high-stakes nudges[134,138]. These safeguards are especially important when R4 is running concurrently with Overlay 1 coaching, because the learner’s heightened reliance and reduced situational bandwidth can otherwise make a helpful layout indistinguishable from hidden coercion.

Finally, implementing Choice Architect support requires multi-objective reasoning: most real decisions trade off plural values (cost, safety, enjoyment, time), so the system should represent trade-offs and let the user steer weights rather than collapsing everything into an opaque score[139]. In this way, R4 reduces decision friction and strategic uncertainty while preserving experienced authorship: the user can always recognise, contest, and revise how the system is shaping the field of choice.
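One way to picture the multi-objective reasoning described above is a weighted comparison in which the user owns the weights and the per-criterion breakdown stays visible. The following is a minimal sketch under stated assumptions, not a prescribed Self++ implementation: the `Option` type, the function names, and the premise that each criterion is already normalised to [0, 1] (higher is better) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    # criterion -> score in [0, 1], higher is better (assumed pre-normalised)
    scores: dict[str, float]


def normalise(weights: dict[str, float]) -> dict[str, float]:
    """Scale non-negative user weights so that they sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    return {criterion: w / total for criterion, w in weights.items()}


def explain(option: Option, weights: dict[str, float]) -> dict[str, float]:
    """Per-criterion contribution, kept visible rather than collapsed."""
    w = normalise(weights)
    return {c: w[c] * option.scores.get(c, 0.0) for c in w}


def rank(options: list[Option], weights: dict[str, float]):
    """Order options by weighted sum; each row carries its own breakdown."""
    rows = [(o.name, explain(o, weights)) for o in options]
    return sorted(rows, key=lambda row: sum(row[1].values()), reverse=True)
```

Calling `rank` again with edited weights re-orders the options, so the ordering follows the user's stated priorities, and the breakdown returned with each row gives the interface something to display in place of a single score.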

This design stance also clarifies the ethical status of nudging within R4. The nudge debate has shown that whether a nudge is manipulative depends less on the inevitability of framing decisions and more on the mechanisms by which the nudging occurs and whether the direction of influence is transparent to the target[48,134,135]. Self++ resolves this tension procedurally: every nudge in R4 must be transparently marked as system-generated and linked to the user’s own stated goals, adaptively tuned to changing priorities rather than a fixed platform metric, and negotiable through opt-out, adjustable strength, and consent gates for high-stakes choices.

5.2 Role pattern R5: Informed deliberation (AI as advisor)

Where R4 shapes the choice environment, R5 externalises the deliberation itself. The AI becomes an Advisor: a conversational analyst that helps the user surface assumptions, compare futures, and reason through trade-offs, while keeping policy selection and endorsement with the user[87,140]. This role pattern is especially important in contexts where persuasive optimisation can outperform genuine behaviour change in simulation but fail to translate into durable, owned decisions in the real world[20,130]. In Self++, the Advisor is designed to feel like a co-determining voice that sharpens reflection rather than a persuader that steers outcomes.

Concretely, the Advisor provides interactive evidence and counterfactuals rather than a single “best” answer. It can assemble an XR dashboard that contrasts options across the user’s stated criteria (for example, work-life balance, skill growth, risk, and social commitments), and allow the user to interrogate “why” and “what if” in place[87,141]. A “day in the life” walkthrough, uncertainty bands, or side-by-side consequence traces can make long-horizon implications more legible without collapsing plural values into one score. The Advisor can also act as a memory and consistency check (“you previously prioritised family time”), and make

R5 therefore targets autonomy in its stronger sense: informed self-endorsement. It reduces “decision entropy” by illuminating unknowns and disagreements between objectives, but it must do so in line with the co-determination principles (T.A.N.). Transparency requires surfacing data provenance, assumptions, and uncertainty (and what the model cannot know). Adaptivity requires tuning explanation depth and modality to the user’s expertise and momentary cognitive load. Negotiability requires editable goals, weights, and constraints, plus the ability to decline lines of reasoning, request alternatives, and override defaults. Together, these safeguards keep the Advisor supportive, legible, and revisable, so the user remains the author of the decision even when the AI is doing substantial analytic work[47,87,140].

5.3 Role pattern R6: Empowered delegation (AI as agentic worker)

If R5 externalises deliberation, R6 externalises execution. Here, the AI becomes an Agentic Worker: it carries out well-scoped tasks on the user’s behalf while remaining subordinate to the user’s intent and oversight[87,143]. The user delegates an outcome (and constraints), the AI proposes an executable plan, and the pair iterates until the plan is endorsed. This preserves autonomy because the AI’s agency is not an independent authority, but an operational extension of the user’s chosen policy.

Because delegation increases the risk of out-of-the-loop failures, complacency, and automation surprise, R6 requires explicit safeguards[144,145]. Specifically, the Agentic Worker should operate as a proposal-approval loop: it presents what it intends to do (steps, assumptions, dependencies, and uncertainty), requests confirmation at appropriate checkpoints, and remains interruptible throughout[87,146]. Intermediate autonomy is preferred over set-and-forget automation: maintaining user involvement at key junctures supports situation awareness and improves recovery when the environment deviates from expectations[145,147].
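The proposal-approval loop can be illustrated with a short sketch. It is a simplified illustration under assumptions rather than a specification: `Step`, `RunLog`, `run_plan`, and the `approve` and `interrupted` callbacks are hypothetical names, and a real system would also surface assumptions, dependencies, and uncertainty alongside each step.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    description: str
    action: Callable[[], str]   # performs the step, returns a short result
    checkpoint: bool = False    # True: ask the user before running this step


@dataclass
class RunLog:
    done: list = field(default_factory=list)
    stopped_at: Optional[str] = None


def run_plan(steps, approve, interrupted=lambda: False) -> RunLog:
    """Execute steps only after endorsement; stop on refusal or interrupt."""
    log = RunLog()
    # 1. Present the whole plan before anything is executed.
    summary = "PLAN: " + "; ".join(s.description for s in steps)
    if not approve(summary):
        log.stopped_at = "plan rejected"
        return log
    for step in steps:
        # 2. Stay interruptible: check before every step.
        if interrupted():
            log.stopped_at = f"interrupted before: {step.description}"
            return log
        # 3. Ask again at checkpoints (for example, irreversible actions).
        if step.checkpoint and not approve("STEP: " + step.description):
            log.stopped_at = f"declined: {step.description}"
            return log
        log.done.append(step.action())
    return log
```

In this sketch a declined checkpoint halts the run and records where it stopped, so the user can re-scope the task instead of discovering a completed action after the fact.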

Self++ implements these safeguards through the co-determination principles (T.A.N.). Transparency means the AI makes its intent, limits, and current authority legible (what it is doing, why, and what could go wrong). Adaptivity means autonomy is adjustable and can be tightened or loosened as the user’s confidence, task criticality, and context change (for example, more confirmations for novel or high-stakes steps). Negotiability means delegation is always explicit, revocable, and renegotiable: the user can override, pause, or re-scope the task at any time, and the AI treats corrections as first-class inputs rather than friction[87,148]. This keeps the system aligned with the user’s values while reducing the need for persuasion; behaviour change is owned by the user because action follows endorsement, not covert steering[20,130].
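The Adaptivity clause (more confirmations for novel or high-stakes steps, with autonomy tightened or loosened by the user) can be sketched as a simple confirmation policy. Everything here is an illustrative assumption: the names, the [0, 1] scales for stakes and novelty, the `max` risk rule, and the `HIGH_STAKES` floor are not taken from the framework itself.

```python
from dataclasses import dataclass

# Assumed floor: steps at or above this stakes level always need confirmation,
# no matter how far the user has loosened the setting.
HIGH_STAKES = 0.8


@dataclass
class AutonomySetting:
    threshold: float = 0.5  # user-controlled; lower means more confirmations

    def tighten(self, amount: float = 0.1) -> None:
        """Ask for confirmation more often."""
        self.threshold = max(0.0, self.threshold - amount)

    def loosen(self, amount: float = 0.1) -> None:
        """Ask for confirmation less often."""
        self.threshold = min(1.0, self.threshold + amount)


def needs_confirmation(setting: AutonomySetting,
                       stakes: float, novelty: float) -> bool:
    """Confirm when the step is risky relative to the user's current setting."""
    if stakes >= HIGH_STAKES:
        return True
    return max(stakes, novelty) >= setting.threshold
```

The threshold changes only through `tighten` and `loosen`, which are meant to be invoked by the user, so the level of autonomy remains a negotiated setting rather than something the agent adjusts for itself.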

At the end of R6, Overlay 2 reaches its apex: the user experiences augmented autonomy in the strict sense. They remain the author of goals and approvals, while the AI reliably executes across tools and contexts with minimal cognitive burden[87,143]. The result is higher throughput without surrendering control: autonomy is strengthened through delegation that is transparent, adjustable, and always negotiable[144].

6. Overlay 3 – Societal and Existential Augmentation (Relatedness and Purpose)

Overlay 3 moves into the most aspirational domain of augmented agency: supporting relatedness, cultural embeddedness, and

Mechanistically, Overlay 3 targets uncertainty at the level of shared generative models. Teams and communities function best when participants converge on shared mental models, a mutual understanding of “what is going on” and “who is responsible for

Accordingly, Overlay 3 defines three role patterns mapping to R7–R9: Contextual Interpreter (making identity, norms, and downstream impacts legible to prevent social surprise); Social Facilitator (nurturing shared understanding and constructive conflict repair); Purpose Amplifier (supporting value-aligned self-regulation and life coherence to prevent value drift)[139,143]. Because these role patterns touch the core of identity, co-determination is non-negotiable. The system must act as a user-legible partner, not a hidden governor. We therefore apply the co-determination principles (T.A.N.) as a hard constraint, requiring explicit transparency and negotiability whenever the system intervenes in relationships, values, or civic judgment[87,130].

6.1 Role pattern R7: Big-picture contextualisation (AI as contextual interpreter)

R7 addresses a recurring failure mode of hybrid XR–AI settings: people can act locally (and fluently) while lacking context about identities, roles, norms, provenance, and downstream consequences. The Contextual Interpreter augments the user with situational and value-relevant legibility across two fronts, the social and the world. On both, it surfaces information that may carry ethical, social, or practical significance for the user, without presupposing which normative framework applies. What counts as value-relevant is shaped by user configuration, cultural context, and the co-determination principles (T.A.N.), ensuring that context augmentation functions as epistemic support, expanding what the user can notice and anticipate, rather than as moral instruction.

On the social side, the Interpreter enforces identity and role clarity in mixed human–AI ecologies. In XR meetings or co-learning scenarios, it should make agent identity and function legible (for example, persistent labels or outlines that distinguish humans from AI agents and indicate the current role, such as “AI facilitator” or “human lead”). This is not cosmetic: disclosure cues help users calibrate expectations and preserve trust when agency shifts, including hand-offs structured via HAT Swapping[19]. Evidence from AI service contexts suggests that identity disclosure can measurably shape user trust and uptake, reinforcing the need for explicit signalling rather than ambiguity[153]. Beyond identity, the Interpreter can recover social signals that are weakened in mediated interaction (for example, shared gaze or attention cues), supporting mutual awareness and coordination[97,154].

On the world side, the Interpreter bridges micro-actions to macro-consequences without coercion. It can surface value-relevant context that would otherwise be invisible at the moment of choice (for example, lifecycle or stakeholder impacts, long-horizon

Co-determination requirements are strongest in R7. Transparency requires identity disclosure, provenance cues, and uncertainty communication; Adaptivity requires tuning context density to attention and stakes (and backing off when low-value); and Negotiability requires user control over what contexts are surfaced, when, and at what sensitivity, including opt-out and override. Together, these safeguards ensure that context augmentation functions as user-aligned sensemaking support rather than covert social steering[20,87].

6.2 Role pattern R8: Facilitating social connection (AI as social facilitator)

R8 moves from making context legible (R7) to actively improving how people relate and collaborate. Because real-world work, in classrooms, multidisciplinary teams, or cross-cultural communities, is inherently social, this role pattern often runs in parallel with competence-building (R1–R3) and autonomy support (R4–R6). Here, the AI acts not as a private companion, but as a light-touch facilitator that strengthens human-to-human coordination. By using XR to surface otherwise-missed social signals, it reduces the small misunderstandings that typically accumulate into conflict[96,154].

The Social Facilitator builds shared mental models by restoring the attentional and intent signals often lost in mediated interaction. XR research demonstrates that cues such as gaze visualisation and mixed-reality communication markers can significantly improve grounding and social presence[57,155,156]. The Facilitator extends this by visualising group dynamics, such as participation balance or conversational rhythm, allowing teams to self-correct without a human moderator[79,157]. In fast-moving or jargon-heavy environments, the AI maintains common ground through optional micro-clarifications and role reminders, ensuring that shared understanding is actively supported rather than merely assumed[24].

Where friction arises, the AI defaults to process support, summarising viewpoints and prompting perspective-taking, rather than adjudicating outcomes. This focus on conversational flow is critical, as fast responsiveness is tightly linked to felt connection[158]. Crucially, Self++ treats R8 as explicitly pro-social: it aims to increase human-to-human contact rather than becoming the user’s primary relationship. While AI companions can reduce loneliness by making users feel “heard”[159], they also pose a risk of social drift toward synthetic companionship[98]. The Social Facilitator mitigates this by preferentially scaffolding real-world relationships, inviting others in and encouraging repair after ruptures, and fading its own presence as human ties strengthen.

Because R8 touches group power and identity, it must be constrained by the co-determination principles (T.A.N.). Transparency requires clarity about what social signals are being sensed (e.g., “is the AI tracking my tone?”) and how feedback cues are generated. Adaptivity requires that the system can “read the room” and enter a do-nothing state when the group is thriving and intervention would be intrusive. Negotiability must be socially contextualised, moving beyond individual consent to collective agreement. Specifically, Collective Negotiability should offer: mutual opt-in, so that shared visualisations (like participation heatmaps) appear only if all members consent; privacy-by-role, allowing individuals to opt out of certain group metrics without social penalty; and adjustable mediation, enabling the group to negotiate the facilitation sensitivity and decide, for example, whether the AI should flag interruptions or stay silent during heated creative debates. By situating negotiability within the group, the AI remains a tool for team

6.3 Role pattern R9: Aligning life and values (AI as purpose amplifier)

R9 is the most delicate form of augmentation: the AI supports the user in living consistently with their self-endorsed values over long horizons, closing the gap between “the life I intend” and “the life I drift into”. This targets long-timescale misalignment (chronic regret, value drift, attention capture) that can accumulate into “existential surprise”. In SDT terms, the aim is not compliance but sustained autonomous self-regulation, where behaviour is owned and integrated rather than externally pressured[32,160]. In FEP terms, the Purpose Amplifier helps the user maintain stable high-level priors (values and identity commitments) while flexibly updating